Visual Wake Word (VWW)


Overview

Motivating examples of use cases

- Common image recognition examples

- Ring doorbell

- Detect when someone shows up at the door, or

- Recognize whether a specific person is at the door.

- Facial recognition on iPhone/iPad/Surface

- Ring doorbell

- Can we untether the device?

- Low power and computation consumption, no wiring necessary

- No construction licensing/permit needed for deployment.

- Example:

- Deploy a TinyML device in an office to recognize whether the room is empty and, if so, turn off the lights.

- Smart glasses that can process the interesting visual cues that are coming in (catching rare items when shopping, noticing hard-to-detect road signs, …)

Challenges

- Performance-related aspects:

- Latency

- Bandwidth

- Capabilities:

- Accuracy

- Personalization

- Data security and privacy

- Resource constraints


Bandwidth and Latency

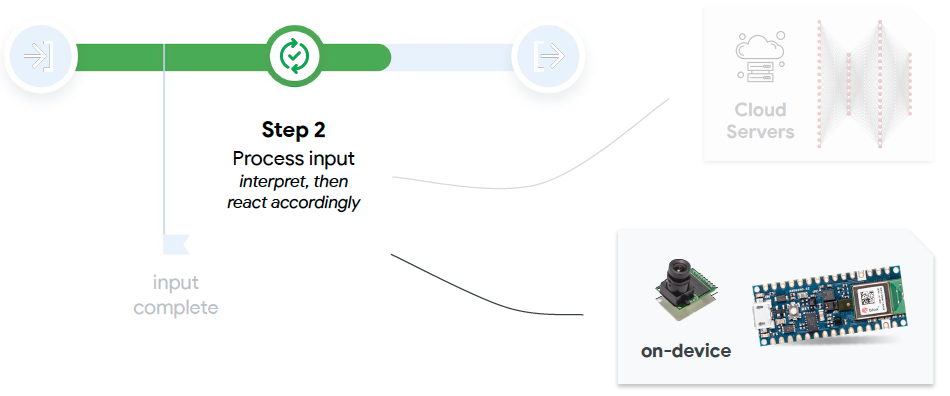

- In a cascading architecture, a TinyML device performs the initial "interesting item" detection, then offloads the subsequent, more compute-intensive task to the cloud only if an interesting item is detected.
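The cascade can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions:
- `cascade`, the detector and classifier callables, and the 0.5 threshold are hypothetical names chosen for illustration.
- The "frames" are plain dicts carrying a precomputed score, not real camera images.

```python
from typing import Callable, Optional


def cascade(frame: dict,
            tiny_detector: Callable[[dict], float],
            cloud_classifier: Callable[[dict], str],
            threshold: float = 0.5) -> Optional[str]:
    """Run the cheap on-device detector first; offload only on a hit."""
    score = tiny_detector(frame)      # stage 1: runs locally on the device
    if score < threshold:
        return None                   # nothing interesting: no upload, no cloud cost
    return cloud_classifier(frame)    # stage 2: expensive task in the cloud


# Hypothetical stand-ins for illustration only.
cloud_calls = []


def fake_tiny_detector(frame: dict) -> float:
    return frame["person_score"]      # pretend the tiny model produced this score


def fake_cloud_classifier(frame: dict) -> str:
    cloud_calls.append(frame["id"])   # record every (costly) upload
    return "visitor:" + frame["id"]


frames = [{"id": "a", "person_score": 0.1},
          {"id": "b", "person_score": 0.9},
          {"id": "c", "person_score": 0.3}]
results = [cascade(f, fake_tiny_detector, fake_cloud_classifier) for f in frames]
print(results, cloud_calls)  # [None, 'visitor:b', None] ['b']
```

Only one of the three frames reaches the cloud. That is where the bandwidth and latency savings of the cascade come from.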

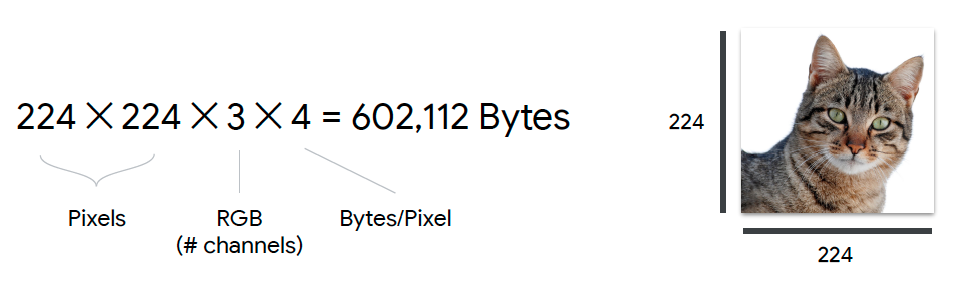

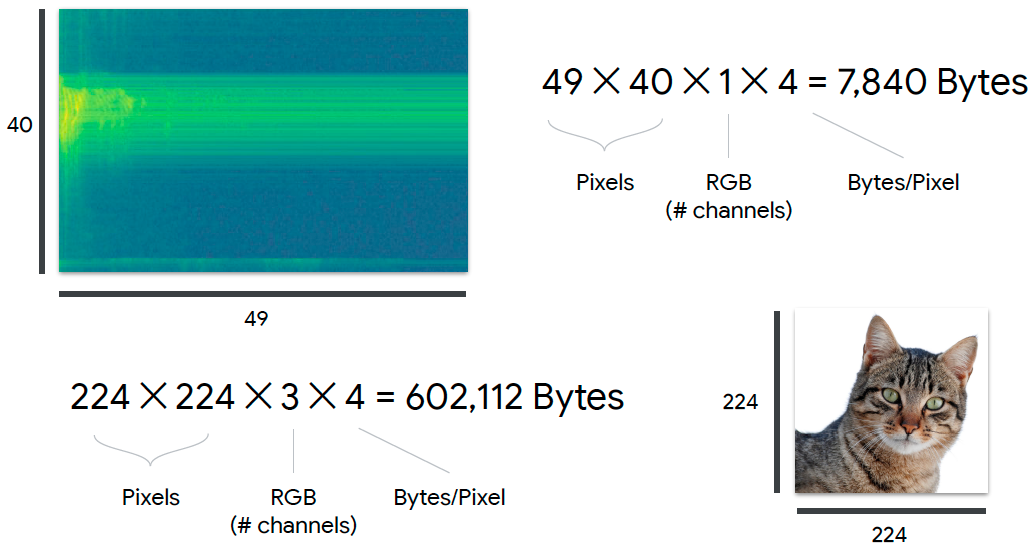

Example: Is there a cat knocking on my door?

- How much data are we sending?

- A typical neural network input image is 224 by 224 pixels, sometimes 300 by 300.

- Three channels (R, G, B) per pixel.

- Each channel value requires 4 bytes.

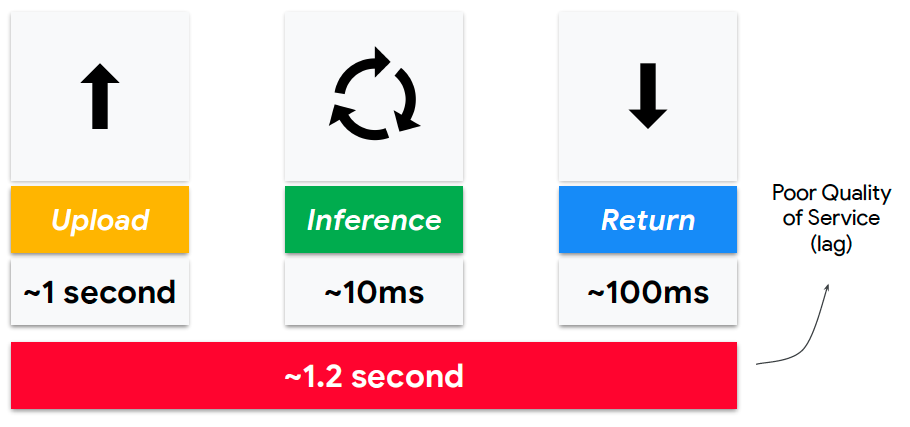

- How long does it take?

- Ping: 25ms (the latency just to be able to send something to the local gateway and be able to get a response back)

- Download speed: 35 megabits per second.

- Upload speed: 4.62 megabits per second ~ 570 KBytes per second

- Image size: 602,000 bytes of data ~ 602 KBytes

- Takes about one second!

- Actual performance

- The cat could be gone!!!!

- Compared to keyword spotting (KWS):

- KWS input data is at least two orders of magnitude smaller

- An image produces significantly more data than an audio signal.

- Higher latency

- Higher power consumption

- Lower user satisfaction
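The arithmetic in the cat example above can be reproduced with a short Python sketch. The helper names are hypothetical. The sketch assumes 1 megabit = 10^6 bits and counts the 25 ms ping once. It ignores protocol overhead, compression, and retransmissions, so real uploads would take longer.

```python
def image_bytes(width: int, height: int, channels: int = 3, bytes_per_value: int = 4) -> int:
    """Raw size of one uncompressed image tensor in bytes."""
    return width * height * channels * bytes_per_value


def upload_seconds(num_bytes: int, upload_mbps: float, ping_ms: float = 0.0) -> float:
    """Ping plus transfer time, assuming 1 Mbit = 10**6 bits and no protocol overhead."""
    return ping_ms / 1000 + num_bytes * 8 / (upload_mbps * 1_000_000)


size_224 = image_bytes(224, 224)                  # 602,112 bytes ~ 602 KB
size_300 = image_bytes(300, 300)                  # 1,080,000 bytes
t_224 = upload_seconds(size_224, upload_mbps=4.62, ping_ms=25)
print(size_224, size_300, round(t_224, 2))        # 602112 1080000 1.07
```

A 300 by 300 input nearly doubles the payload, so at the same upload speed the transfer alone would take close to two seconds.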

Capability constraints

- What if we don’t go to the cloud? (No more latency or bandwidth issues!)

- Constraints:

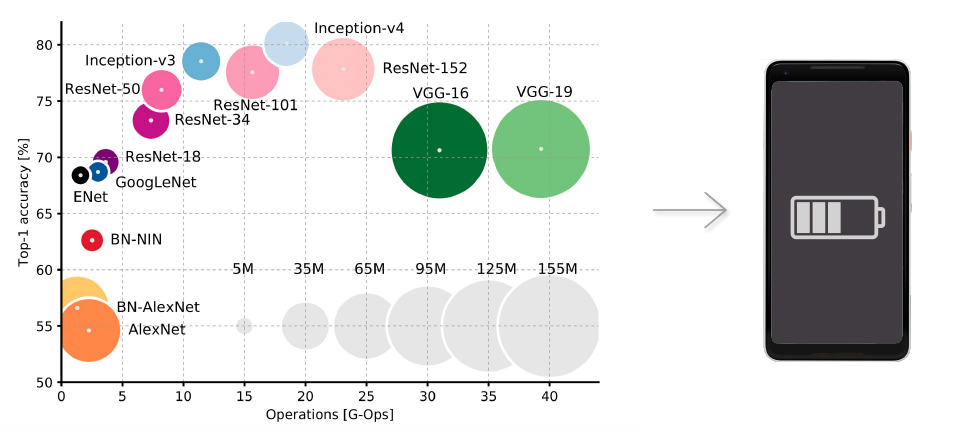

- Microcontroller: limited compute means higher processing latency (need smaller models)

- Microcontroller: tight memory limits (need smaller models)

- How do these constraints impact performance?

- False positive

- False negative
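False positives and false negatives can be counted directly from a model's predictions. Below is a minimal Python sketch. It assumes binary labels where 1 means "person present", as in the VWW labels. The function name and the sample data are hypothetical.

In the office-lights example, the two errors have different costs:
- A false negative switches the lights off on someone who is still in the room.
- A false positive only wastes energy by leaving the lights on.

```python
def error_rates(labels, predictions):
    """Return (false positive rate, false negative rate); values must be 0 or 1."""
    tp = fp = tn = fn = 0
    for y, y_hat in zip(labels, predictions):
        if y == 1 and y_hat == 1:
            tp += 1
        elif y == 0 and y_hat == 1:
            fp += 1          # predicted a person where there is none
        elif y == 0 and y_hat == 0:
            tn += 1
        else:
            fn += 1          # missed a person who is actually there
    fpr = fp / (fp + tn) if fp + tn else 0.0
    fnr = fn / (fn + tp) if fn + tp else 0.0
    return fpr, fnr


labels = [1, 1, 0, 0, 1, 0]
predictions = [1, 0, 0, 1, 1, 0]
print(error_rates(labels, predictions))  # (0.3333333333333333, 0.3333333333333333)
```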

Data collection and processing

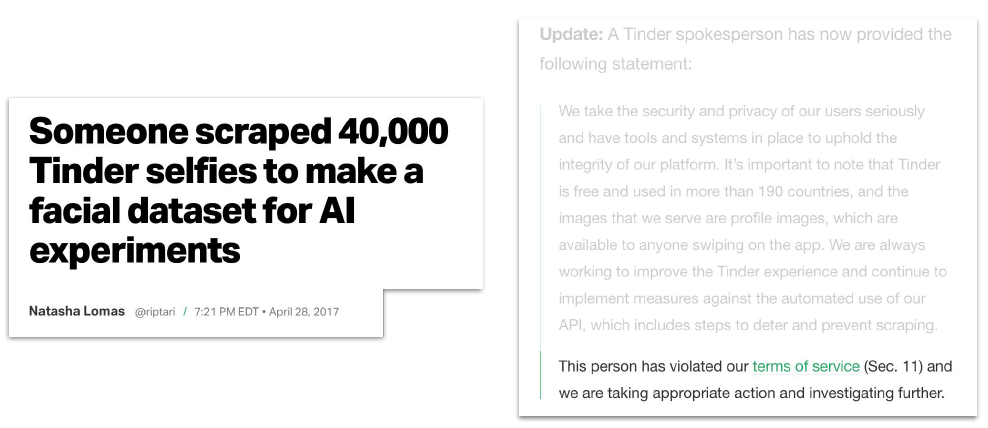

- Be very careful when collecting images.

- This also means others may not be able to use this data to build their own AI models.

- If existing data is clean, legal, and valid, it can be reused to generate a task-specific subset of training data.

Example: Visual Wake Words Dataset

- Google Research’s Paper: Visual Wake Words Dataset

- Relabels images from the COCO dataset

- “Each image is assigned a label 1 or 0. The label 1 is assigned as long as it has at least one bounding box corresponding to the object of interest (e.g. person) with the box area greater than 0.5% of the image area.”

- Person: 1

- Not-person: 0

- Somewhat similar to KWS, but you don’t have to create the dataset from scratch!

- A powerful concept, as long as the data usage license is permissive!

- Is that dataset really going to meet the needs of your particular TinyML application?

- Balanced

- Relevant

- Quality

- Quantity
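The relabeling rule quoted above can be sketched in Python, followed by a quick check of class balance. Assumptions:
- Each image is a plain dict holding its width, height, and COCO-style annotations.
- Boxes use `bbox` as `[x, y, width, height]`, and `category_id` 1 means "person".
- Real COCO JSON stores images and annotations in separate lists joined by `image_id`.
- The sample images here are invented for illustration.

```python
from collections import Counter

PERSON_ID = 1  # "person" category id in COCO


def vww_label(image: dict, category_id: int = PERSON_ID,
              min_area_fraction: float = 0.005) -> int:
    """1 if any box of the target category covers > 0.5% of the image area, else 0."""
    image_area = image["width"] * image["height"]
    for ann in image["annotations"]:
        _, _, w, h = ann["bbox"]  # COCO boxes: [x, y, width, height]
        if ann["category_id"] == category_id and w * h > min_area_fraction * image_area:
            return 1
    return 0


# Invented 640x480 examples: 0.5% of 307,200 pixels is 1,536 pixels.
images = [
    {"width": 640, "height": 480, "annotations": [{"category_id": 1, "bbox": [10, 10, 50, 40]}]},   # 2,000 px person
    {"width": 640, "height": 480, "annotations": [{"category_id": 1, "bbox": [10, 10, 30, 30]}]},   # 900 px: too small
    {"width": 640, "height": 480, "annotations": [{"category_id": 17, "bbox": [0, 0, 200, 200]}]},  # a cat, not a person
    {"width": 640, "height": 480, "annotations": []},
]
labels = [vww_label(img) for img in images]
counts = Counter(labels)
print(labels, counts[1] / len(labels))  # [1, 0, 0, 0] 0.25
```

A positive fraction far from 0.5, as in this tiny sample, is the kind of imbalance the "Balanced" question is meant to catch.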

VWW Model

Recall: constraints and trade-offs

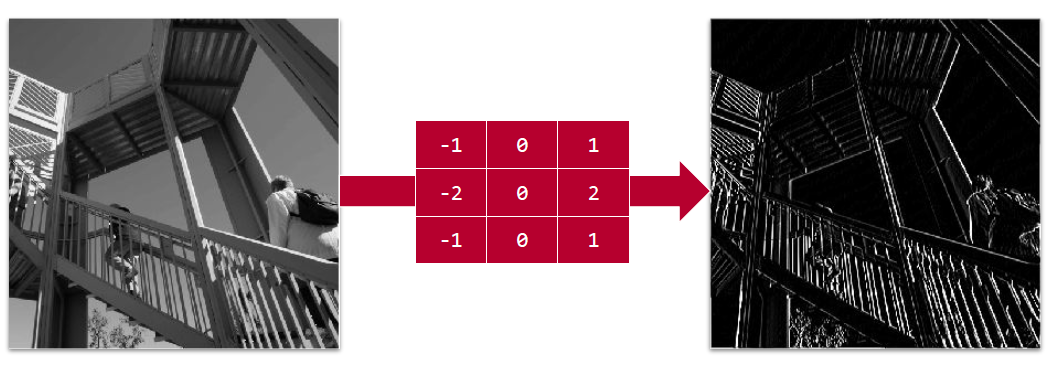

Recall: convolutions

- Convolutions on gray-scale pictures

- Convolutions on colored images

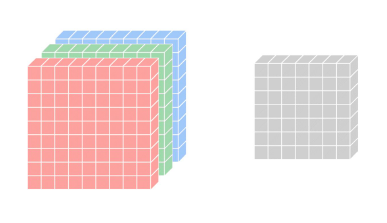

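- Convolution through the full depth (all channels)

- Input Feature Map: 8x8x3 (width x height x channels)

- Kernel: 3x3x3 (one 3x3 slice per input channel; the per-channel results are summed)

- Final output: 6x6x1 tensor (stride 1, no padding)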

- Math generalization

- $D_F$ : dimension of a square input feature map

- M: number of input channels

- $D_K$ : dimension of filter matrix (square)

- N: number of output channels

- Total number of multiplications: ${D_K}^2 * M * {D_F}^2 * N$ (a lot!)
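To check the multiplication count, here is a naive standard convolution in plain Python that counts every multiply. It is a teaching sketch with no padding and stride 1, and the function names are hypothetical.

Because there is no padding, the 8x8x3 example produces a 6x6 output rather than 8x8. The count therefore plugs in the output size (6) for $D_F$, whereas the formula above assumes padding that keeps the output the same size as the input.

```python
def standard_conv(x, kernels):
    """Naive valid (no padding), stride-1 convolution.

    x: H x W x M nested lists; kernels: N kernels, each K x K x M.
    Returns (output as out_h x out_w x N nested lists, number of multiplications).
    """
    h, w, m = len(x), len(x[0]), len(x[0][0])
    k, n = len(kernels[0]), len(kernels)
    out_h, out_w = h - k + 1, w - k + 1
    out = [[[0.0] * n for _ in range(out_w)] for _ in range(out_h)]
    mults = 0
    for f, kern in enumerate(kernels):           # one pass per output channel
        for i in range(out_h):
            for j in range(out_w):
                acc = 0.0
                for di in range(k):
                    for dj in range(k):
                        for c in range(m):       # sum across all input channels
                            acc += x[i + di][j + dj][c] * kern[di][dj][c]
                            mults += 1
                out[i][j][f] = acc
    return out, mults


def standard_cost(d_k, m, d_f, n):
    return d_k ** 2 * m * d_f ** 2 * n


x = [[[1.0] * 3 for _ in range(8)] for _ in range(8)]     # 8x8x3 input
kern = [[[1.0] * 3 for _ in range(3)] for _ in range(3)]  # one 3x3x3 kernel
out, mults = standard_conv(x, [kern])
print(len(out), len(out[0]), len(out[0][0]), mults, standard_cost(3, 3, 6, 1))  # 6 6 1 972 972
```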

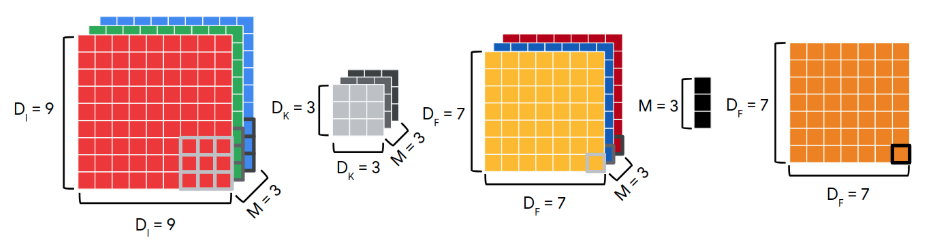

- Depthwise separable convolutions

- MobileNets: Efficient Convolutional Neural Networks for Mobile Vision Applications

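- Run time (multiplications): ${D_K}^2 * M * {D_F}^2 + M * N * {D_F}^2 = M * {D_F}^2 * ({D_K}^2 + N)$. This is a depthwise step (one $D_K \times D_K$ filter per input channel) plus a pointwise 1x1 step that mixes the M channels into N outputs.

- Relative to a standard convolution, the cost shrinks by a factor of $\frac{1}{N} + \frac{1}{{D_K}^2}$. The more filters we use and the larger the kernels are, the more multiplications we save.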

- Far fewer parameters to store.

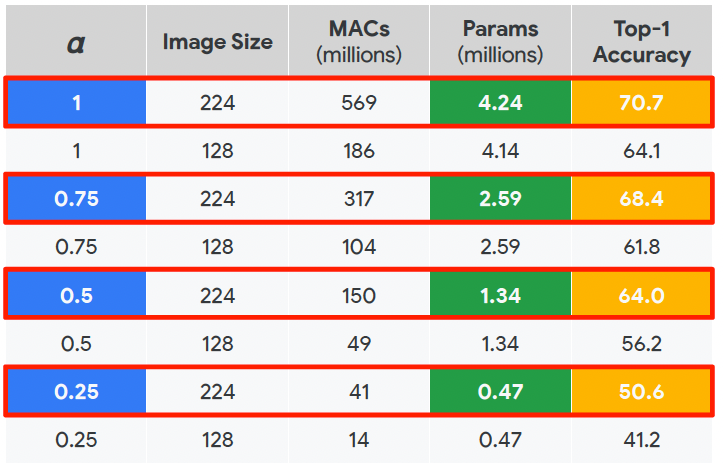

- Performance tradeoff

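- A multiplier $\alpha$ ($0 < \alpha \le 1$, called the width multiplier in the MobileNets paper) thins the channels of every layer to reduce model size further: $\alpha * M * {D_F}^2 * ({D_K}^2 + \alpha * N)$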
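The savings can be checked numerically with a Python sketch that evaluates the cost formulas for one layer. The layer sizes are an illustrative assumption, not taken from these notes: 3x3 kernels, 512 input and output channels, and a 14x14 feature map, which resemble MobileNet's 14x14x512 blocks. `alpha` plays the role of the width multiplier.

```python
def standard_cost(d_k, m, d_f, n):
    """Multiplications for a standard convolution: D_K^2 * M * D_F^2 * N."""
    return d_k ** 2 * m * d_f ** 2 * n


def separable_cost(d_k, m, d_f, n, alpha=1.0):
    """Depthwise + pointwise multiplications, with width multiplier alpha."""
    m_a, n_a = alpha * m, alpha * n
    depthwise = d_k ** 2 * m_a * d_f ** 2   # one D_K x D_K filter per input channel
    pointwise = m_a * n_a * d_f ** 2        # 1x1 conv mixing M channels into N
    return depthwise + pointwise


d_k, m, d_f, n = 3, 512, 14, 512
std = standard_cost(d_k, m, d_f, n)
sep = separable_cost(d_k, m, d_f, n)
sep_half = separable_cost(d_k, m, d_f, n, alpha=0.5)
print(std, sep, sep_half)                   # 462422016 52283392.0 13296640.0
print(sep / std, 1 / n + 1 / d_k ** 2)      # both about 0.113
```

For this layer the separable version needs roughly 9x fewer multiplications. Halving the channels with $\alpha = 0.5$ cuts the cost by almost another 4x, because the dominant pointwise term scales with $\alpha^2$.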

Training model

- Training from scratch is expensive.

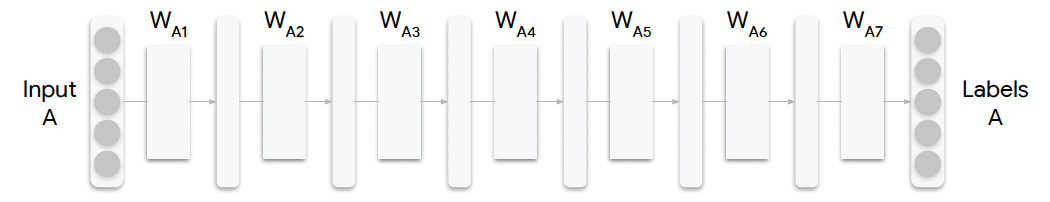

Neural network of a model

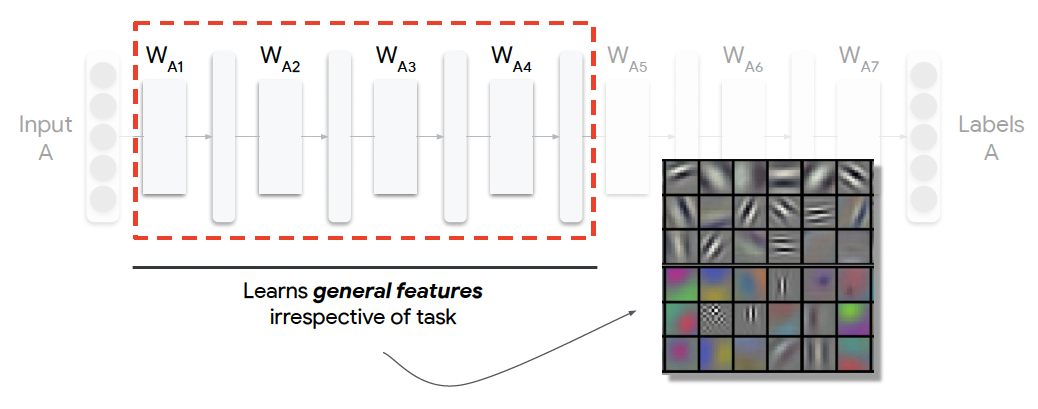

Neural network of a model: earlier layers

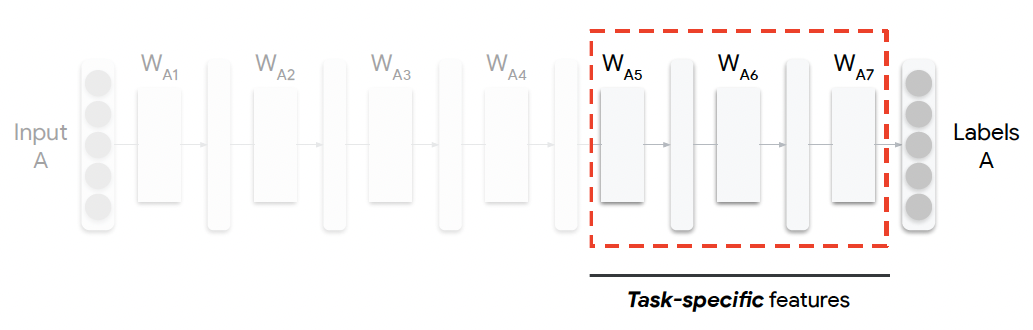

Neural network of a model: later layers

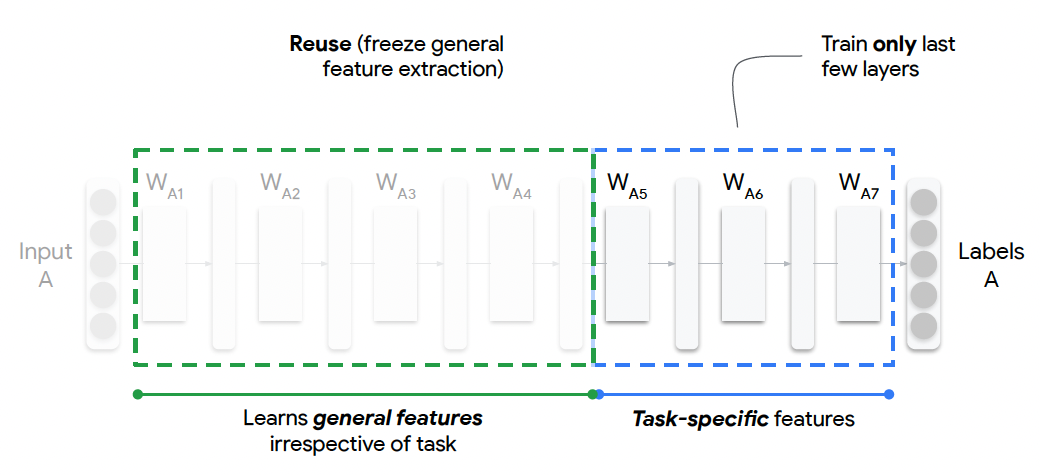

Conclusion

- Reuse the pretrained model (freeze the general feature-extraction layers)

- Train only the last few layers for task-specific features
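The "freeze, then train the head" recipe can be illustrated without any framework. In this pure-Python toy, a fixed, hand-picked projection followed by ReLU stands in for the frozen early layers of a pretrained network. Only a logistic-regression head on top is trained. The weights, data, and function names are all hypothetical.

In a real framework, the same idea is expressed by marking the pretrained layers as non-trainable before fitting a new final layer. In Keras, for example, you set `trainable = False` on those layers.

```python
import math

# Hypothetical frozen "feature extractor": fixed weights, never updated below.
# Stands in for the general-purpose early layers of a pretrained model.
FROZEN_W = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]


def extract_features(x):
    """ReLU(W x): the frozen, reusable part of the network."""
    return [max(0.0, sum(w_i * x_i for w_i, x_i in zip(row, x))) for row in FROZEN_W]


def train_head(samples, labels, epochs=200, lr=0.1):
    """Train only a logistic-regression head on top of the frozen features."""
    feats = [extract_features(x) for x in samples]  # computed once: backbone is frozen
    w, b = [0.0] * len(FROZEN_W), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            z = sum(w_i * f_i for w_i, f_i in zip(w, f)) + b
            grad = 1.0 / (1.0 + math.exp(-z)) - y   # d(log loss)/dz
            w = [w_i - lr * grad * f_i for w_i, f_i in zip(w, f)]
            b -= lr * grad
    return w, b


def predict(w, b, x):
    z = sum(w_i * f_i for w_i, f_i in zip(w, extract_features(x))) + b
    return 1 if z > 0 else 0


# Toy task-specific data: label 1 when the first input is positive.
samples = [(2, 1), (3, -1), (2, -2), (-2, -1), (-3, 1), (-2, 2)]
labels = [1, 1, 1, 0, 0, 0]
frozen_before = [row[:] for row in FROZEN_W]
w, b = train_head(samples, labels)
print([predict(w, b, x) for x in samples])  # [1, 1, 1, 0, 0, 0]
```

The backbone never changes, so its features are computed once up front and only the small head (four weights and a bias) is trained. This is why reusing a pretrained model is much cheaper than training from scratch.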

Hands-on notebook

- Make a copy of the following notebook, Visual Wake Word, by going to File > Save a copy in Drive.