Data Engineering

Overview

What is data engineering?

- A supervised AI is trained on a corpus of training data

- Data engineering in AI is all about datasets

Good data is necessary for accuracy

What problem are you trying to solve?

- Your data must contain useful features

- Can a human (expert) distinguish between examples of each class?

- How will you measure performance?
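For a keyword detector, one common way to measure performance is to track false positive and false negative rates on held-out examples. The sketch below is illustrative only: the function name `error_rates` and the toy labels are assumptions made for this example, not part of the course material.

```python
def error_rates(y_true, y_pred, positive):
    """Return (false_positive_rate, false_negative_rate) for one target class."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == positive:
            if pred == positive:
                tp += 1
            else:
                fn += 1  # missed a real occurrence of the keyword
        else:
            if pred == positive:
                fp += 1  # triggered on something that was not the keyword
            else:
                tn += 1
    # Guard against division by zero when a class is absent from the data.
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return fpr, fnr


# Toy labels (hypothetical): "yes" is the keyword, "no" is everything else.
y_true = ["yes", "yes", "no", "no", "no", "yes"]
y_pred = ["yes", "no", "no", "yes", "no", "yes"]
fpr, fnr = error_rates(y_true, y_pred, positive="yes")
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

Which of the two rates matters more depends on the application, so it helps to decide on acceptable values before collecting data.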

Both quantity and quality will influence your model’s performance

- Wide distribution of training examples

- Accurate labels

- Sufficient class balance
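A quick check of class balance can be done by counting labels. The following is a minimal sketch: the helper `class_balance`, the label names, and the counts are hypothetical, and what counts as "sufficient" balance depends on the problem.

```python
from collections import Counter


def class_balance(labels):
    """Return per-class counts, per-class shares, and the max/min count ratio."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = sum(counts.values())
    shares = {label: n / total for label, n in counts.items()}
    # A ratio of 1.0 means perfectly balanced classes; larger means more skew.
    imbalance = max(counts.values()) / min(counts.values())
    return counts, shares, imbalance


# Hypothetical label list for a three-class keyword dataset.
labels = ["yes"] * 60 + ["no"] * 30 + ["unknown"] * 10
counts, shares, imbalance = class_balance(labels)
for label, n in counts.most_common():
    print(f"{label:8s} {n:4d}  {shares[label]:.0%}")
print(f"largest class is {imbalance:.1f}x the smallest")
```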

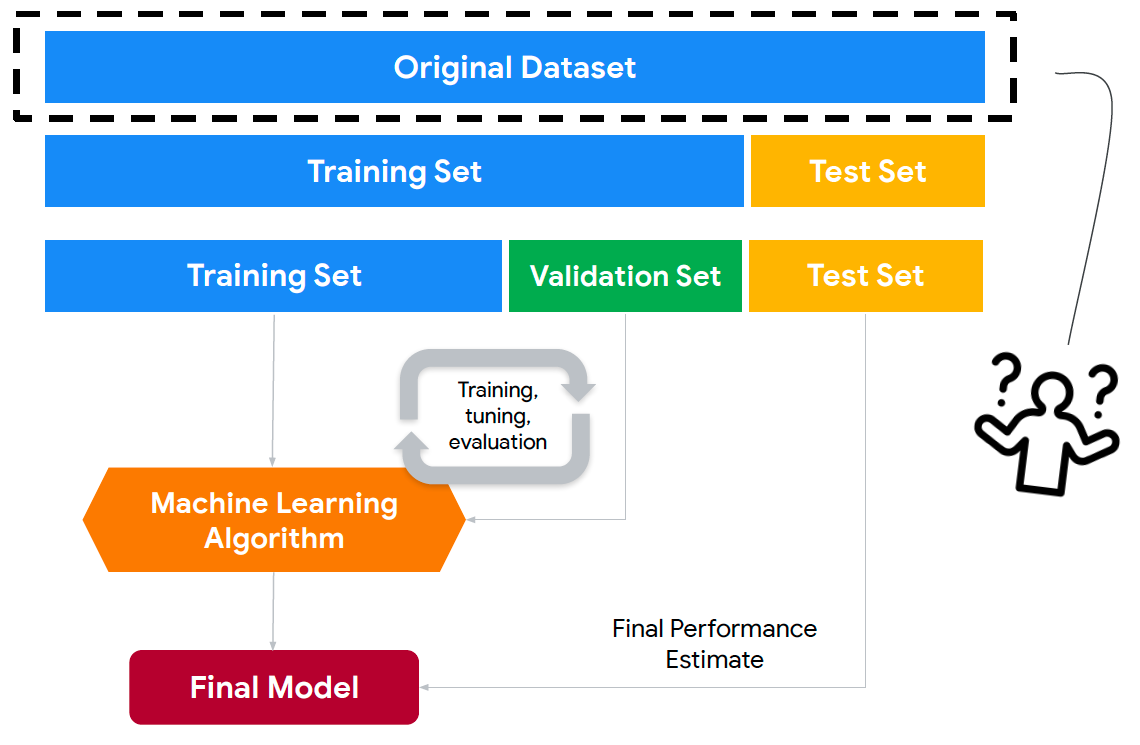

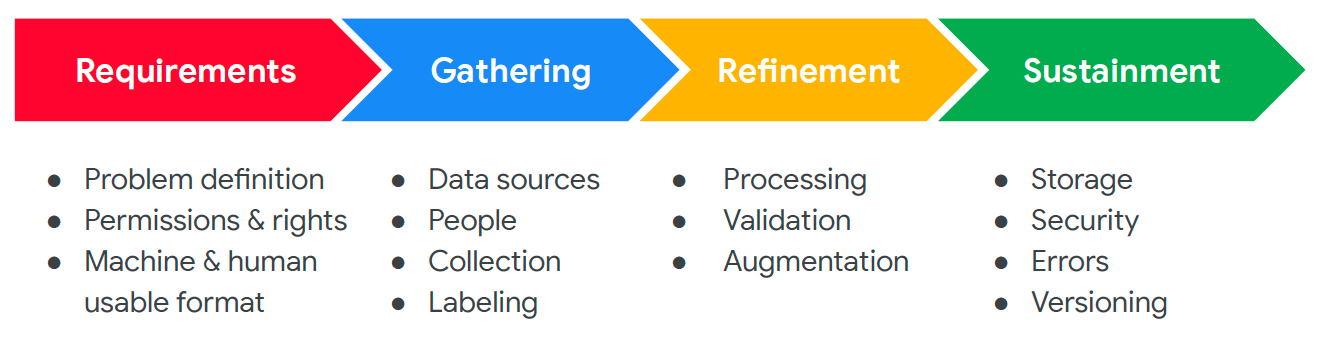

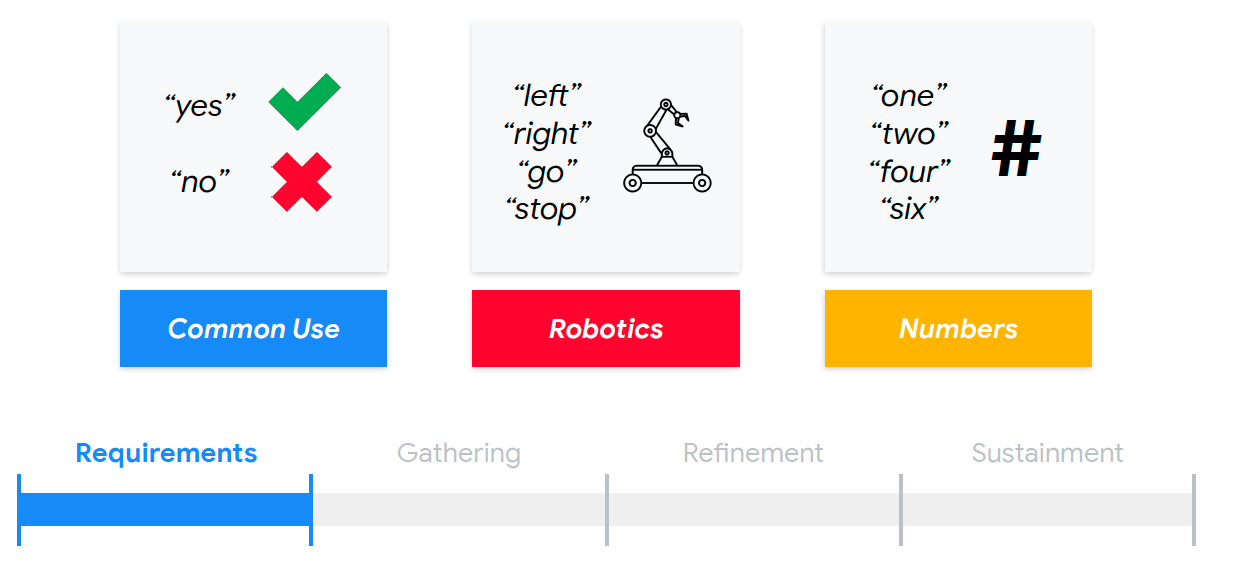

Key steps in a data engineering process

Requirements

- Problem definition

- Machine and human usable format

- Permissions and rights

Question: Permissions and rights

- Where does your data originate?

- Open?

- Copyrighted?

- Licensed?

- Product users?

- What’s yours and what’s NOT yours

- Licenses:

- Apache

- BSD

- Creative Commons

Gathering

- People

- Collection

- Labeling

- Data sources

- Sensors

- Crowdsourcing

- Product users

- Paid contributors

Refinement

- Processing

- Augmentation

- Validation

Question: Validation

- Some data is unusable

- How will you verify the data you collected?

- Manually (time, cost)

- Automation

- Domain expertise

- Disputes/disagreements?

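One simple way to handle disagreements between reviewers is a majority vote with a minimum agreement level, sending everything else back for expert review. This is a sketch under assumptions: `resolve_label` and the 0.6 threshold are hypothetical choices, not a method prescribed by the slides.

```python
from collections import Counter


def resolve_label(votes, min_agreement=0.6):
    """Return the majority label, or None when reviewers disagree too much.

    min_agreement is the fraction of votes the winning label must receive.
    Keeping it above 0.5 ensures that ties are never accepted.
    """
    if not votes:
        return None
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


# Hypothetical reviewer votes for three recordings.
reviews = {
    "clip_001": ["yes", "yes", "yes"],
    "clip_002": ["yes", "yes", "no"],
    "clip_003": ["yes", "no"],
}
resolved = {clip: resolve_label(votes) for clip, votes in reviews.items()}
needs_expert = [clip for clip, label in resolved.items() if label is None]
print(resolved)
print("send to a domain expert:", needs_expert)
```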

Sustainment

- Storage

- Security

- Errors

- Versioning

Question: Versioning

- Your dataset will evolve

- Augment missing data

- Expand user-base
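Because the dataset will change over time, it helps to be able to tell two versions apart. One lightweight approach, sketched below, is to hash every file and derive a single fingerprint for the whole dataset. The function name `dataset_fingerprint` and the directory layout are assumptions for illustration; dedicated data versioning tools offer much more.

```python
import hashlib
import tempfile
from pathlib import Path


def dataset_fingerprint(root):
    """Hash every file under root and return (manifest, overall_digest)."""
    root = Path(root)
    manifest = {}
    # Sort the paths so the result does not depend on filesystem ordering.
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        name = path.relative_to(root).as_posix()
        manifest[name] = hashlib.sha256(path.read_bytes()).hexdigest()
    overall = hashlib.sha256()
    for name, digest in manifest.items():
        overall.update(f"{name}:{digest}\n".encode("utf-8"))
    return manifest, overall.hexdigest()


# Demo on a throwaway directory with made-up file contents.
with tempfile.TemporaryDirectory() as tmp:
    word_dir = Path(tmp) / "yes"
    word_dir.mkdir()
    (word_dir / "a.ogg").write_bytes(b"first recording")
    _, before = dataset_fingerprint(tmp)

    # Adding a sample changes the fingerprint, signalling a new version.
    (word_dir / "b.ogg").write_bytes(b"second recording")
    manifest, after = dataset_fingerprint(tmp)

print("version changed:", before != after)
```

Storing the manifest alongside each trained model makes it possible to trace which data a given model was trained on.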

Datasets require significant effort

- These massive ML datasets are constructed by hand

- Common Voice: 5000+ hours of spoken audio

- Common Objects in Context (COCO): 2.5M+ labeled object instances

- ImageNet: 14M+ labeled images

- Waymo: 1,950 20-second driving segments

- KITTI-360: 73+ km of annotated driving data

- How to build your own dataset?

Data Engineering for Keyword Spotting

Reminder: the data engineering process

Requirements

- Collecting data based on usage

Gathering: Data Collection

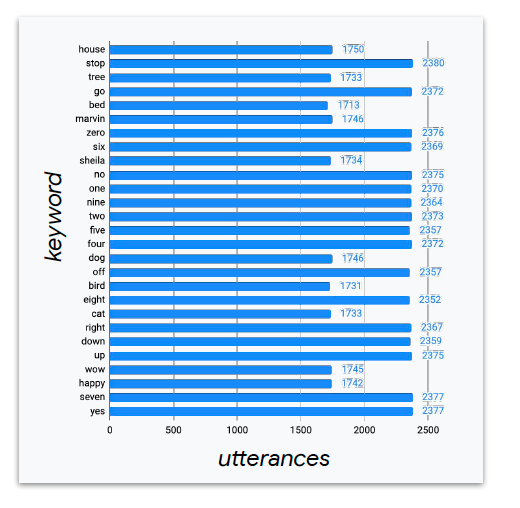

- 2,618 volunteers

- consented to have their voices redistributed

- variety of accents

- More than 1000 samples for each keyword

- Browser-based recording

Refinement: Data Validation

- Some data is unusable

- Too quiet, wrong word

- Started with automated tools

- Remove low volume recordings

- Extract loudest 1s (from 1.5s examples)

- All 105,829 remaining utterances were manually reviewed through crowdsourcing
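The two automated steps above (dropping low-volume recordings and extracting the loudest 1 s from a 1.5 s example) can be sketched in plain Python. This is not the tooling used for the original dataset: the helper names, the `MIN_RMS` threshold, and the tiny toy clip are assumptions for illustration. With real audio at 16 kHz, the window would be 16,000 samples.

```python
import math


def rms(samples):
    """Root-mean-square level of a clip; 0.0 for an empty clip."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def loudest_window(samples, window):
    """Return the contiguous run of `window` samples with the highest energy."""
    if len(samples) <= window:
        return list(samples)
    # Sliding sum of squares: add the sample entering, drop the one leaving.
    energy = sum(s * s for s in samples[:window])
    best_energy, best_start = energy, 0
    for start in range(1, len(samples) - window + 1):
        energy += samples[start + window - 1] ** 2 - samples[start - 1] ** 2
        if energy > best_energy:
            best_energy, best_start = energy, start
    return list(samples[best_start:best_start + window])


MIN_RMS = 0.01  # hypothetical loudness threshold; tune on your own recordings

# Toy clip: silence, a short burst of signal, then silence again.
clip = [0.0] * 4 + [0.5, -0.5] * 4 + [0.0] * 4
trimmed = loudest_window(clip, window=8)
keep = rms(trimmed) >= MIN_RMS
print("kept" if keep else "rejected as too quiet", trimmed)
```

Automated checks like this cannot detect a wrong word, which is why manual review remains necessary.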

Sustainment

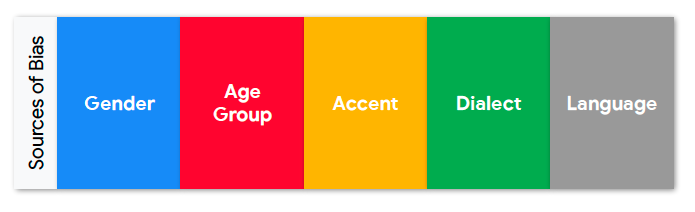

- Sources of bias

Collecting your custom dataset

- Requirements

- How much data is needed?

- What are acceptable false positive and false negative rates?

- What is the impact of errors?

- Gathering

- What are possible recording issues?

- Too short or clipped utterances

- Too quiet

- Can we augment this with background noises?

- Kitchen

- Car

- TV/Radio in the background

- Crowded room

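Augmenting with background noise usually means mixing a noise recording (kitchen, car, TV) into each utterance at a chosen signal-to-noise ratio. The sketch below shows the arithmetic on plain lists of samples. The function name `mix_at_snr` and the toy signals are assumptions, and the sketch does not guard against clipping after mixing.

```python
import math


def mix_at_snr(speech, noise, snr_db):
    """Mix noise into speech so the speech-to-noise power ratio equals snr_db."""
    if not speech or not noise:
        raise ValueError("speech and noise must not be empty")
    # Repeat the noise so it covers the whole utterance.
    tiled = [noise[i % len(noise)] for i in range(len(speech))]
    speech_power = sum(s * s for s in speech) / len(speech)
    noise_power = sum(n * n for n in tiled) / len(tiled)
    if noise_power == 0:
        return list(speech)  # silent noise: nothing to add
    # Choose a gain so that speech_power / (gain^2 * noise_power) = 10^(snr_db/10).
    gain = math.sqrt(speech_power / (noise_power * 10 ** (snr_db / 10)))
    return [s + gain * n for s, n in zip(speech, tiled)]


# Toy signals (hypothetical): a loud utterance and a quiet background hum.
speech = [0.5, -0.5, 0.5, -0.5]
noise = [0.1, -0.1]
mixed = mix_at_snr(speech, noise, snr_db=20)
print(mixed)
```

Lower `snr_db` values produce noisier examples; sampling a range of values gives the model a wider distribution of training conditions.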

Building your own dataset

Plan B

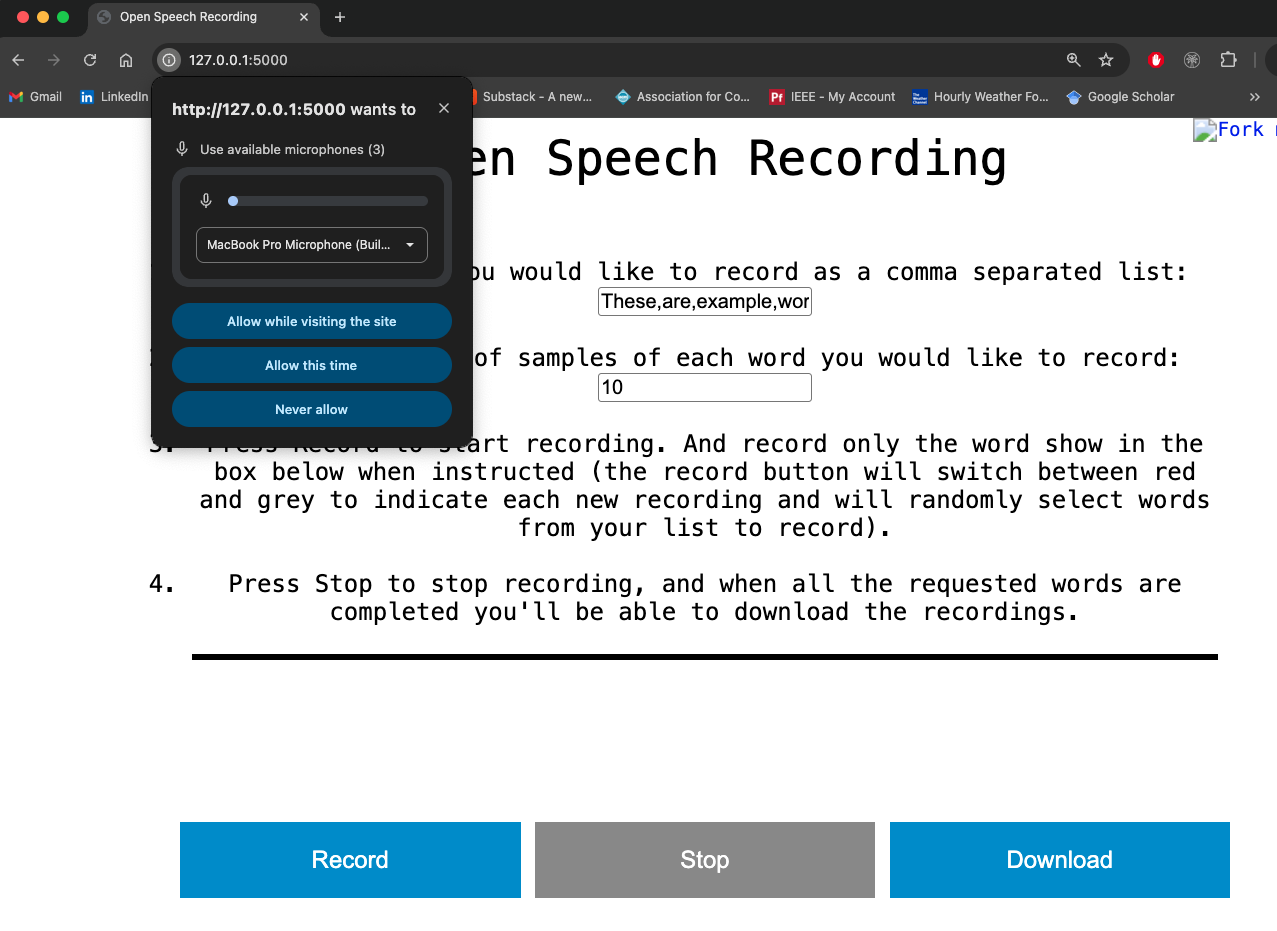

- Use Harvard’s Open Speech Recording Link

- Only use this if you are not able to set up the recording app locally.

Set up local recording app

- Run the following to set up and deploy the recording app

- For Windows users, replace `export` with `set`


```shell
git clone https://github.com/ngo-classes/open-speech-recording.git
cd open-speech-recording
git submodule update --init --recursive
pip install flask
export FLASK_APP=main.py
python -m flask run
```

- Visit http://127.0.0.1:5000 in an incognito browser window

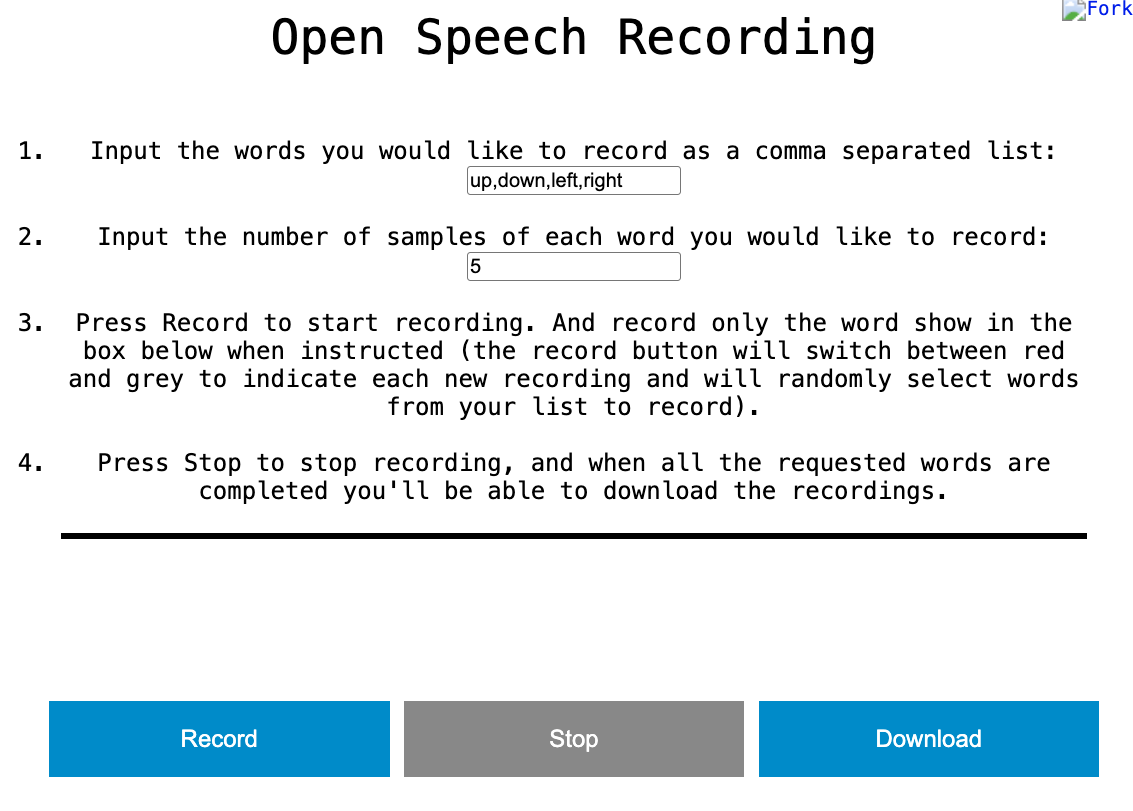

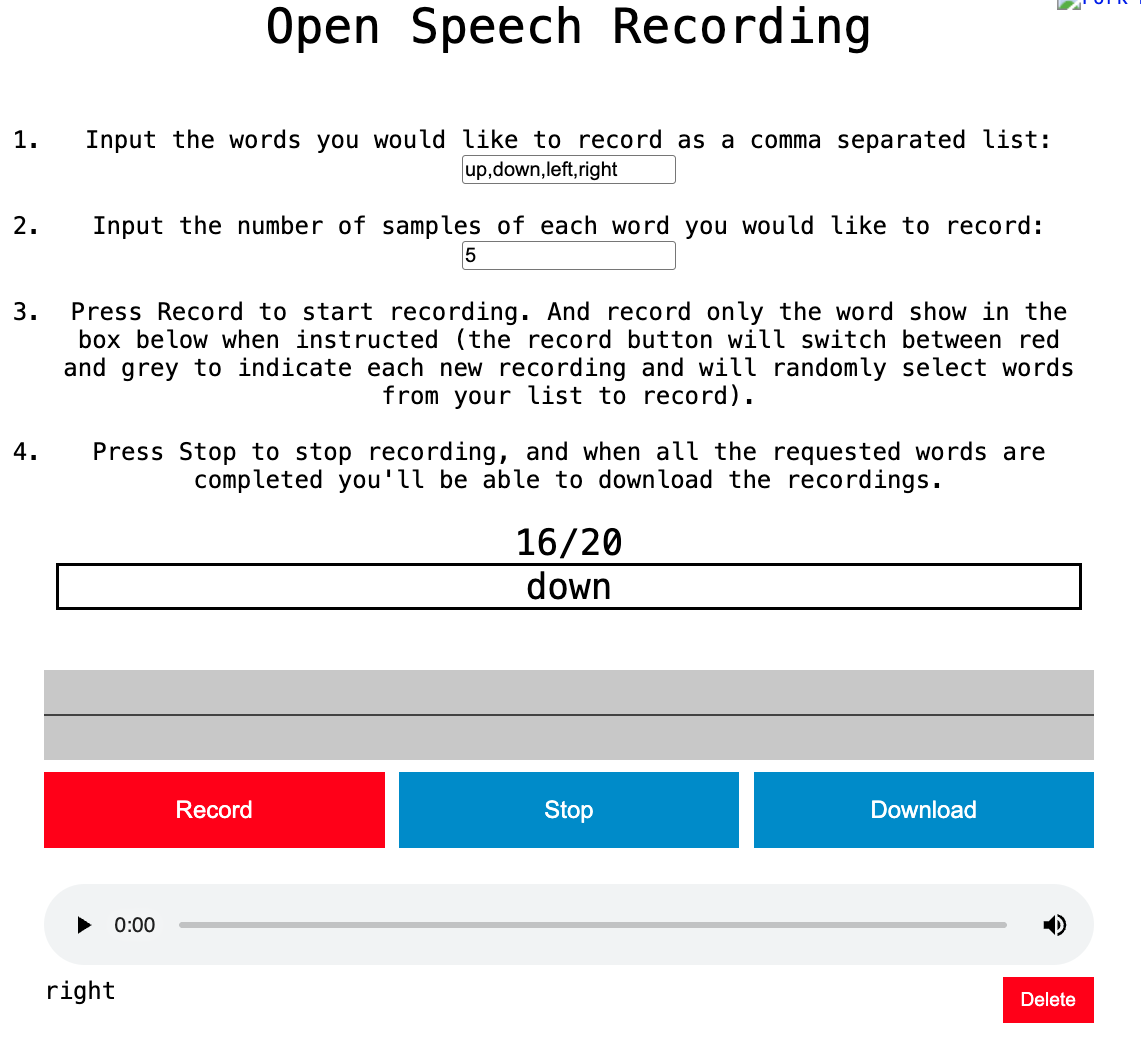

Collecting data

- Edit Box 1 to include the words you want to use

- Edit Box 2 to include the number of samples of each word

- Press Record to start the recording process

- Practice a few times so that you can time the recording just right (red/gray record button)

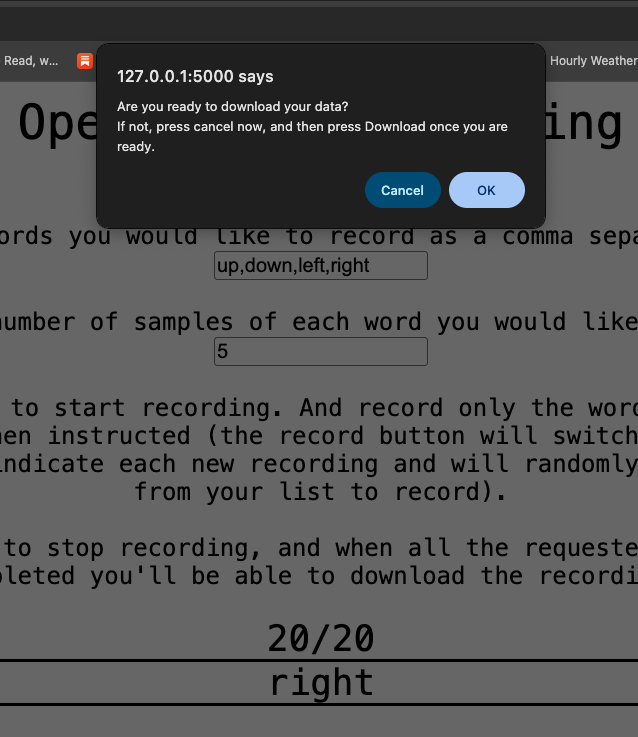

- Press OK to download the data

- Data are downloaded in .ogg format into the same directory