Deployment of KWS

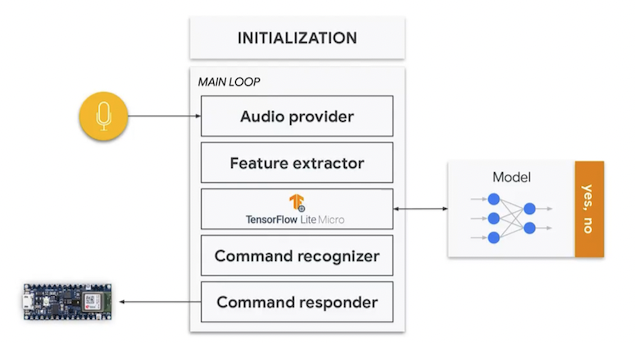

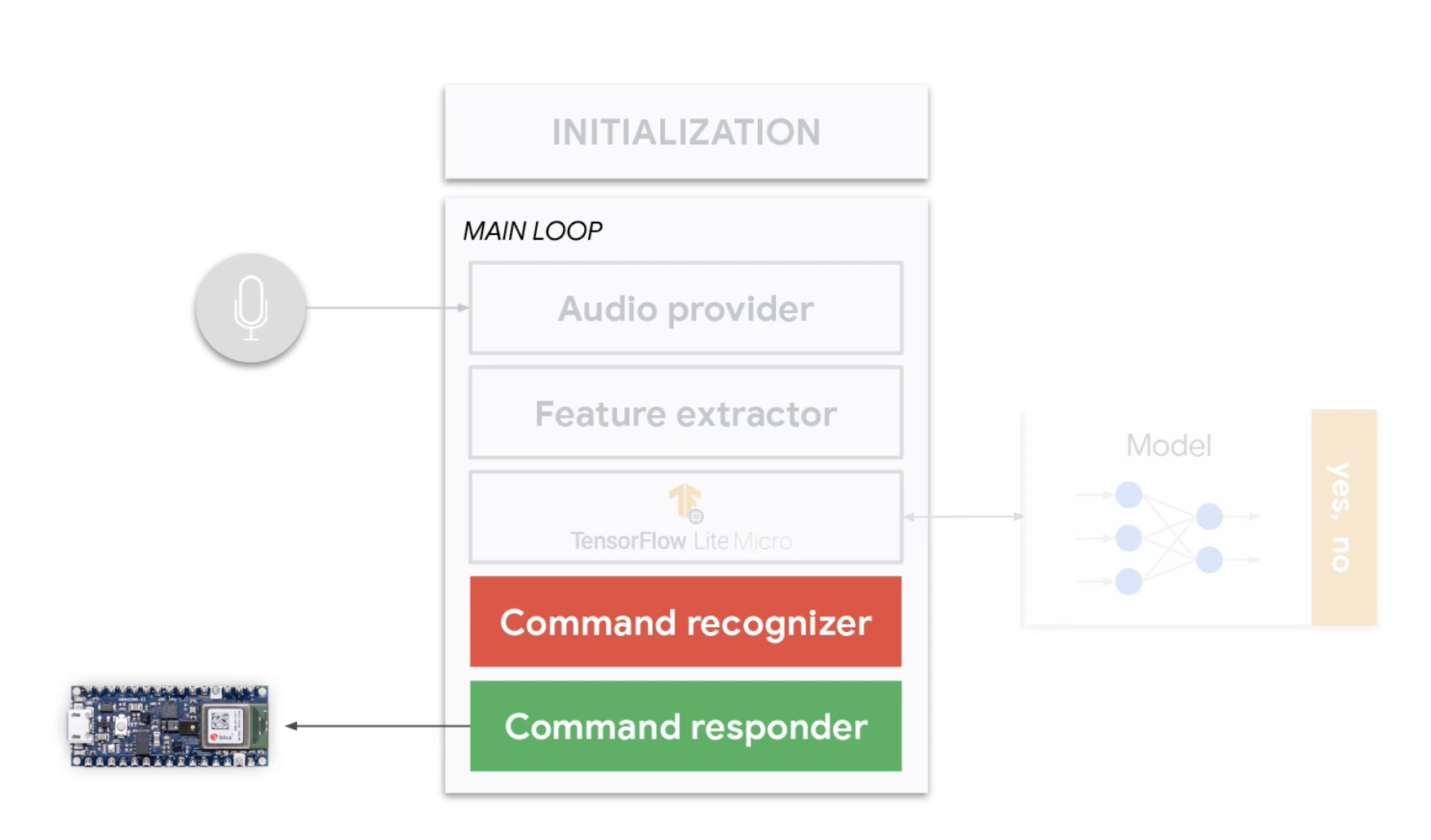

Overview

Keyword Spotting Components

Arduino Example

- File/Examples/Harvard_TinyMLx/micro_speech

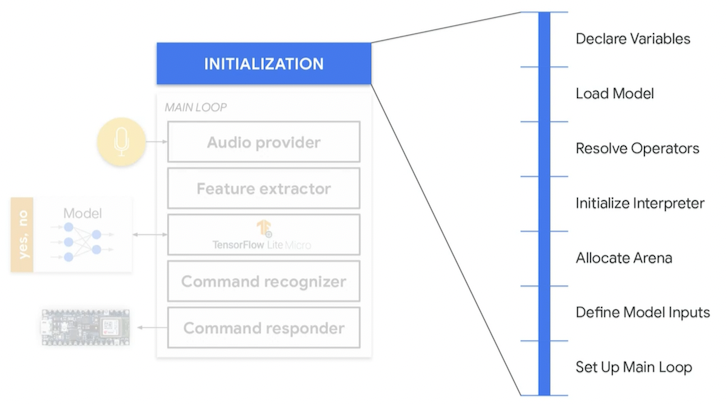

Initialization

Overview

- General steps that need to be done for any ML application deployment

Steps

- Declare variables
  - `micro_speech.ino`, `namespace`: TFL-related variables
  - Tensor Arena Size
  - …

- Load model
  - `micro_features_model.cpp`: byte array describing the model
  - `micro_speech.ino`, `setup()`: load the model into a `model` variable

- Resolve Operators
  - `micro_speech.ino`, `setup()`: use `AddOPERATOR`, where `OPERATOR` is the name of the operator to be added.
  - List of default Ops

- Allocate/instantiate the Interpreter
  - `micro_speech.ino`, `setup()`
- Allocate memory for the TensorArena
  - `micro_speech.ino`, `setup()`

- Define model inputs
  - `micro_speech.ino`, `setup()`

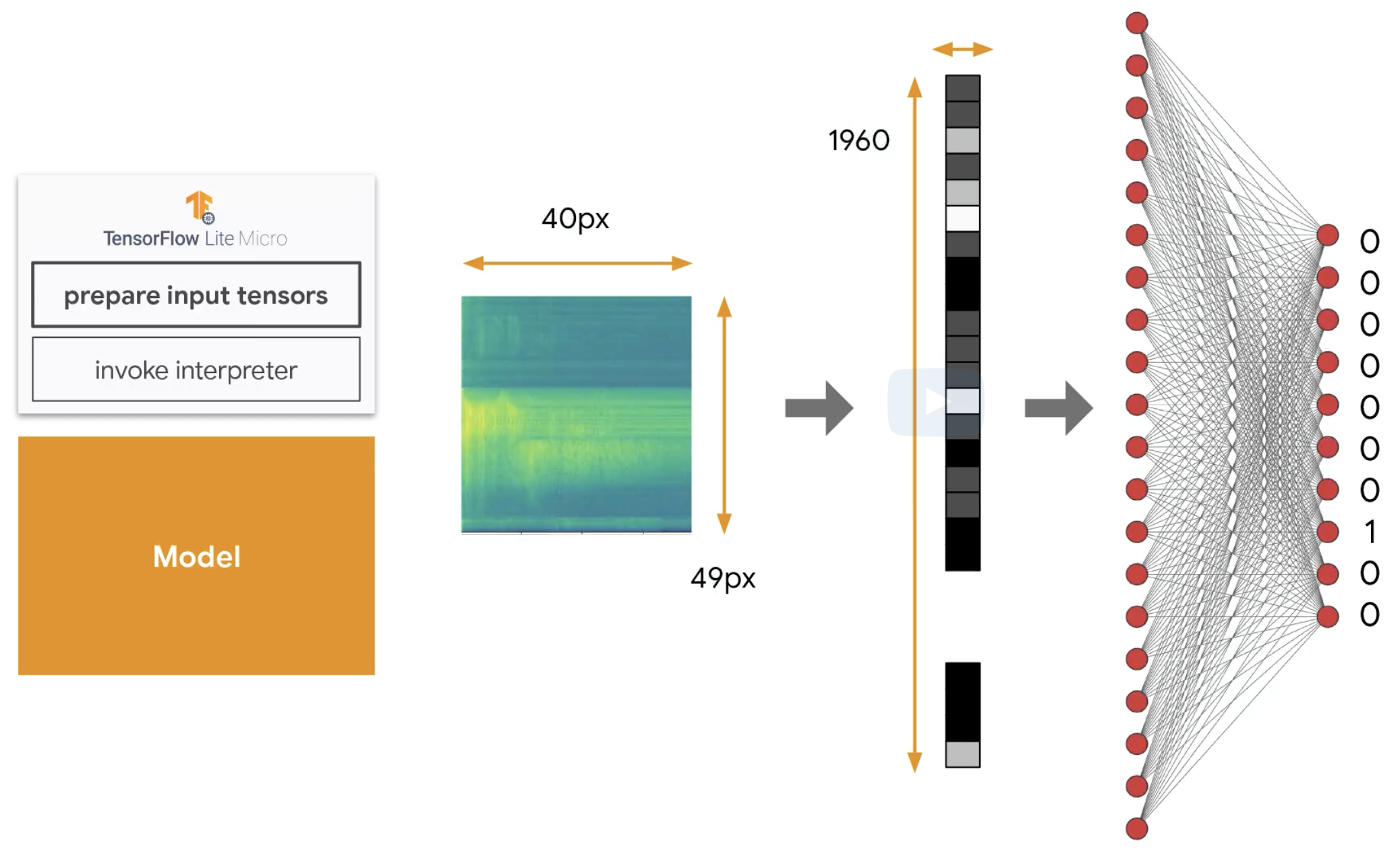

  - `model_input->dims->size`: see the `kws-training-...` notebook.
  - `model_input` is the `FLATTENED_SPECTROGRAM_SHAPE`.
    - The input has two dimensions; the first is simply a wrapper with a value of 1 (flattened).
    - The second dimension is the size of the spectrogram (see the kws-training notebook):
      - `FEATURE_BIN_COUNT = 40`: 40
      - `OVERLAPPING_WINDOWS = window_counter(CLIP_DURATION_MS, int(WINDOW_SIZE_MS), WINDOW_STRIDE)`: 49
  - `micro_features_micro_model_settings.h`: `constexpr int kFeatureSliceSize = 40;` and `constexpr int kFeatureSliceCount = 49;`
    - `FEATURE_BIN_COUNT`: `kFeatureSliceSize`
    - `OVERLAPPING_WINDOWS`: `kFeatureSliceCount`


- Set up the main loop
  - `micro_speech.ino`, `setup()`
  - Set up the feature provider, `static_feature_provider`: it accesses the audio buffer and generates spectrograms.
  - Set up the command recognizer used after inference, `static_recognizer`: it recognizes commands based on inference results and facilitates device responses.
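The input shape described under "Define model inputs" can be checked with a little arithmetic. The sketch below is only an illustration: `kFeatureSliceSize` and `kFeatureSliceCount` are the values quoted above, while the clip duration, window size, and stride (1000 ms, 30 ms, 20 ms), the `WindowCount` formula, and the `InputShapeMatches` helper are assumptions made here and may not match the notebook's `window_counter` or the example's actual check.

```cpp
#include <cassert>

// Values quoted in the notes (micro_features_micro_model_settings.h).
constexpr int kFeatureSliceSize = 40;   // FEATURE_BIN_COUNT
constexpr int kFeatureSliceCount = 49;  // OVERLAPPING_WINDOWS

// Assumed for illustration; the notes do not state these values.
constexpr int kClipDurationMs = 1000;
constexpr int kWindowSizeMs = 30;
constexpr int kWindowStrideMs = 20;

// Number of full windows that fit in a clip when sliding by a fixed stride.
constexpr int WindowCount(int clip_ms, int window_ms, int stride_ms) {
  return clip_ms < window_ms ? 0 : 1 + (clip_ms - window_ms) / stride_ms;
}

// Under the assumed timing values, the window count agrees with the notes.
static_assert(WindowCount(kClipDurationMs, kWindowSizeMs, kWindowStrideMs) ==
                  kFeatureSliceCount,
              "assumed timing values should give 49 windows");

// Total number of values in the flattened spectrogram.
constexpr int kFeatureElementCount = kFeatureSliceSize * kFeatureSliceCount;

// Hypothetical stand-in for the shape check on model_input in setup():
// two dimensions, a wrapper of size 1, then the flattened spectrogram.
bool InputShapeMatches(int dims_size, int dim0, int dim1) {
  return dims_size == 2 && dim0 == 1 && dim1 == kFeatureElementCount;
}
```

With these values, the second input dimension is 40 × 49 values, which is why the model input is described as a flattened spectrogram.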

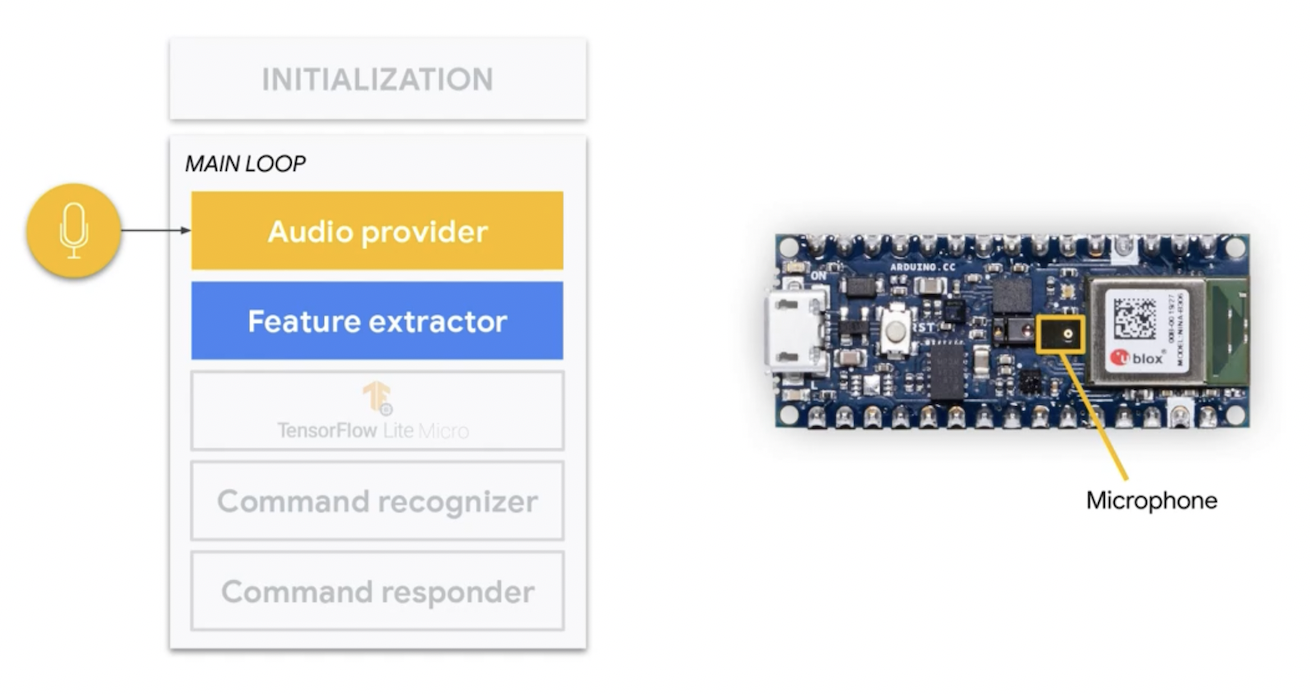

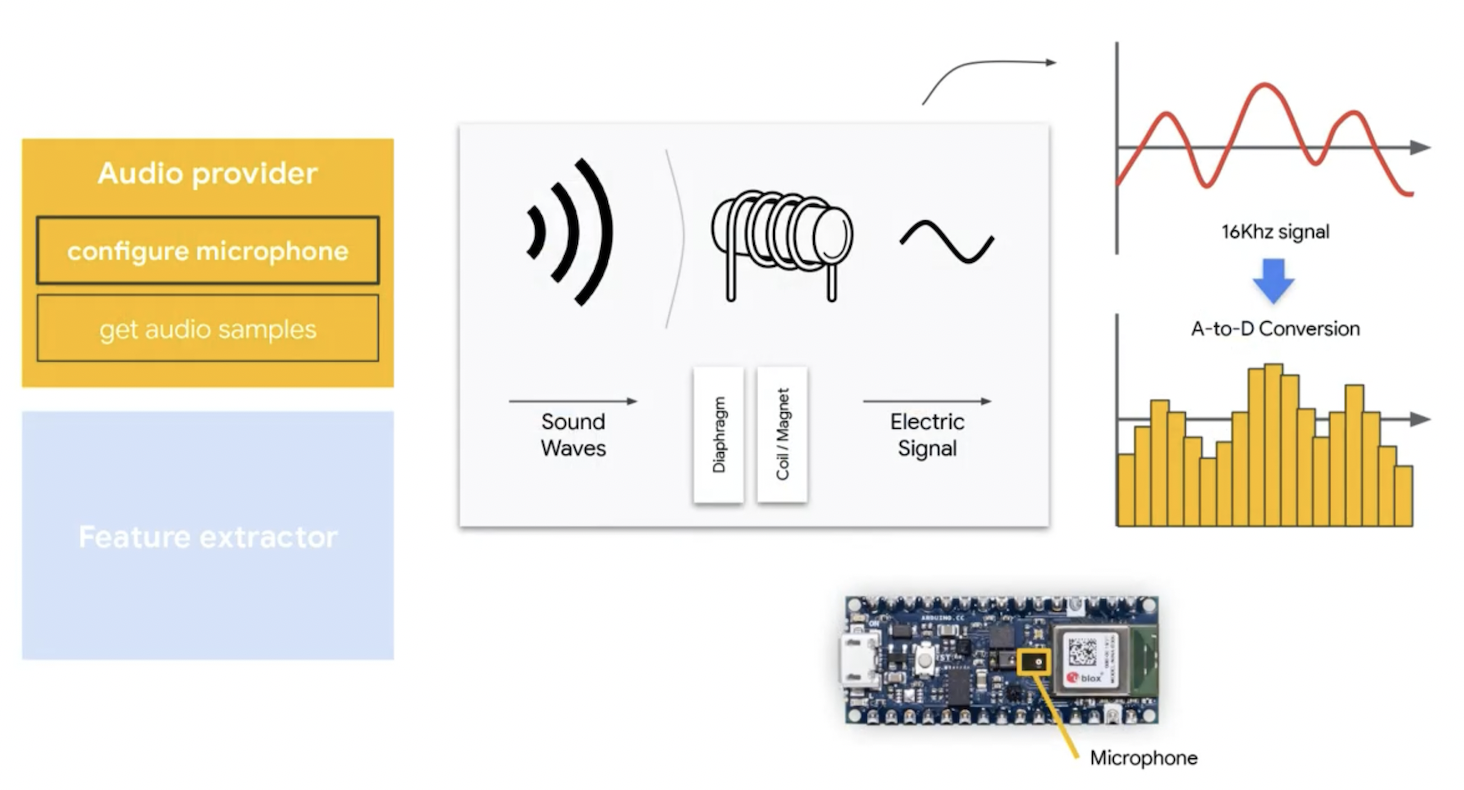

Pre-processing

Overview

- `micro_speech.ino`, `void loop()`

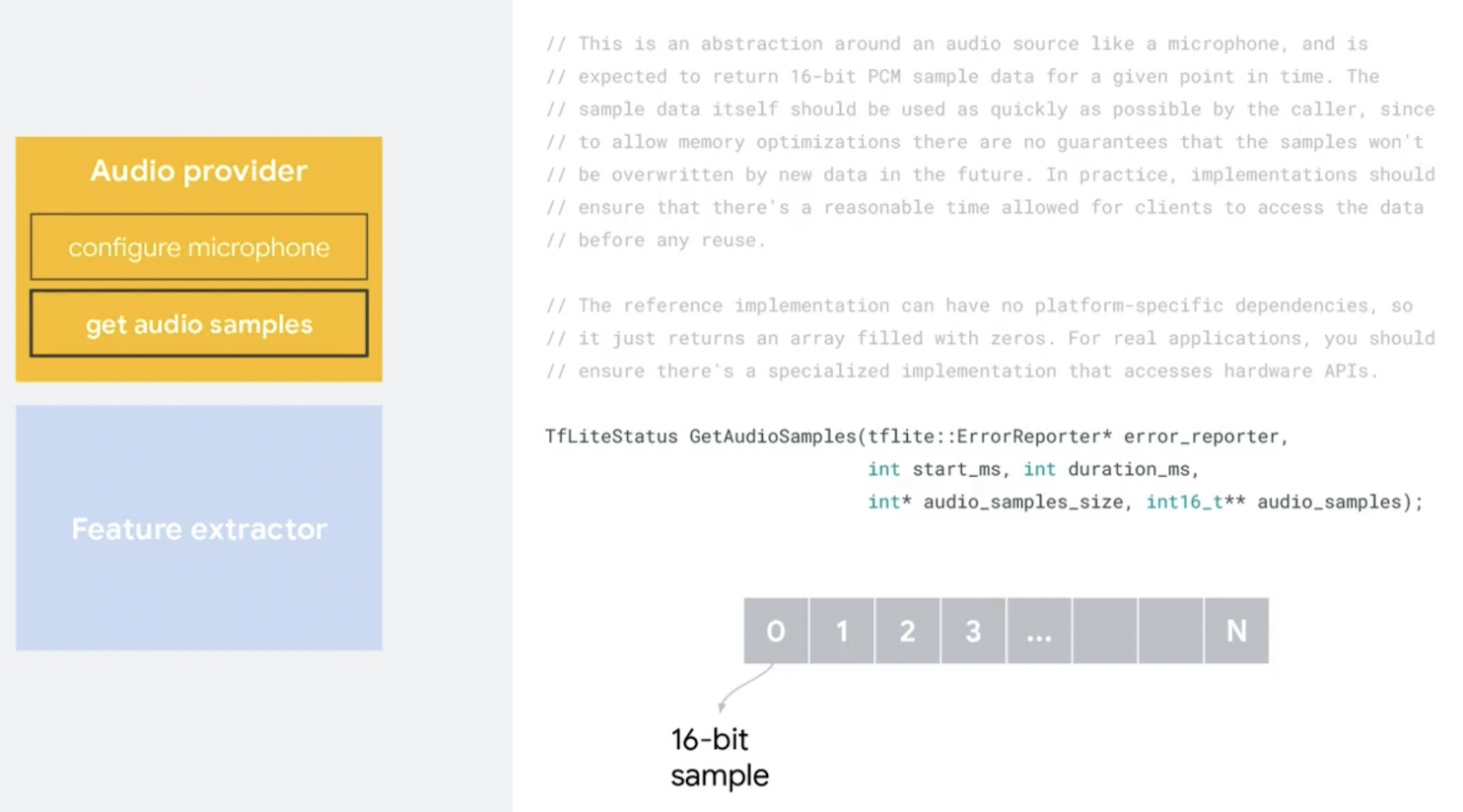

Audio provider

- Continuously reads the audio signal from the microphone on the Arduino and converts it into a digital representation, which can then be converted into a `spectrogram` that is consumed by the neural network.

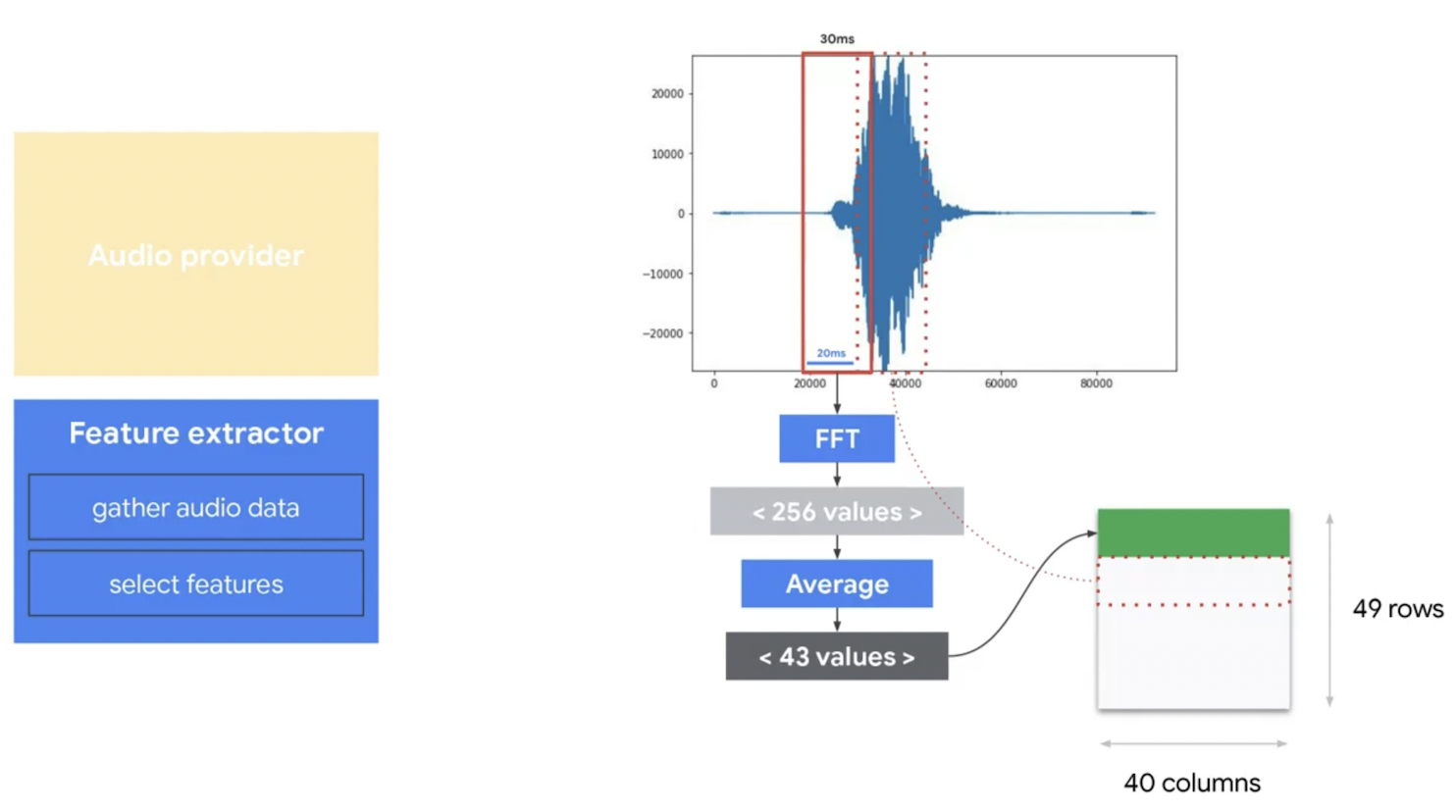

- `void loop()` / `PopulateFeatureData` (right-click, then go to definition)
  - Goal: get the audio samples into a spectrogram format.
  - Previous time is the last time `PopulateFeatureData` was called.
  - Current time is the time as of right now.
  - Identify the (old) slices to drop.
  - Identify the new slices that need to be calculated.
  - `GetAudioSamples`
    - `InitAudioRecording` / `CaptureSamples`
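The bookkeeping between previous time and current time can be sketched as follows. This is a simplified illustration rather than the example's actual `PopulateFeatureData`: the 20 ms stride, the `SliceUpdate` struct, and the `PlanSliceUpdate` name are assumptions introduced here, and the first-run case (where every slice must be computed) is left out.

```cpp
#include <algorithm>
#include <cassert>

constexpr int kFeatureSliceCount = 49;     // slices in the spectrogram (from the notes)
constexpr int kFeatureSliceStrideMs = 20;  // assumed time step between slices

struct SliceUpdate {
  int slices_to_keep;  // still-valid slices that stay in the spectrogram
  int slices_needed;   // old slices dropped and replaced by new ones
};

// Decides how many slices to drop and recompute, given the time of the
// previous call and the current time (both in milliseconds).
SliceUpdate PlanSliceUpdate(int previous_time_ms, int current_time_ms) {
  assert(current_time_ms >= previous_time_ms);
  const int last_step = previous_time_ms / kFeatureSliceStrideMs;
  const int current_step = current_time_ms / kFeatureSliceStrideMs;
  // Never recompute more slices than the spectrogram holds.
  const int slices_needed =
      std::min(current_step - last_step, kFeatureSliceCount);
  return {kFeatureSliceCount - slices_needed, slices_needed};
}
```

If 100 ms have passed since the last call, five slices are dropped and five new ones are computed; after a long pause the whole spectrogram is rebuilt.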

Feature Extractor

- `GenerateMicroFeatures`
  - Unlikely to need modification.
  - Performs an FFT on the audio sample data, then generates the MFCC and extracts the spectrogram data.

Inference

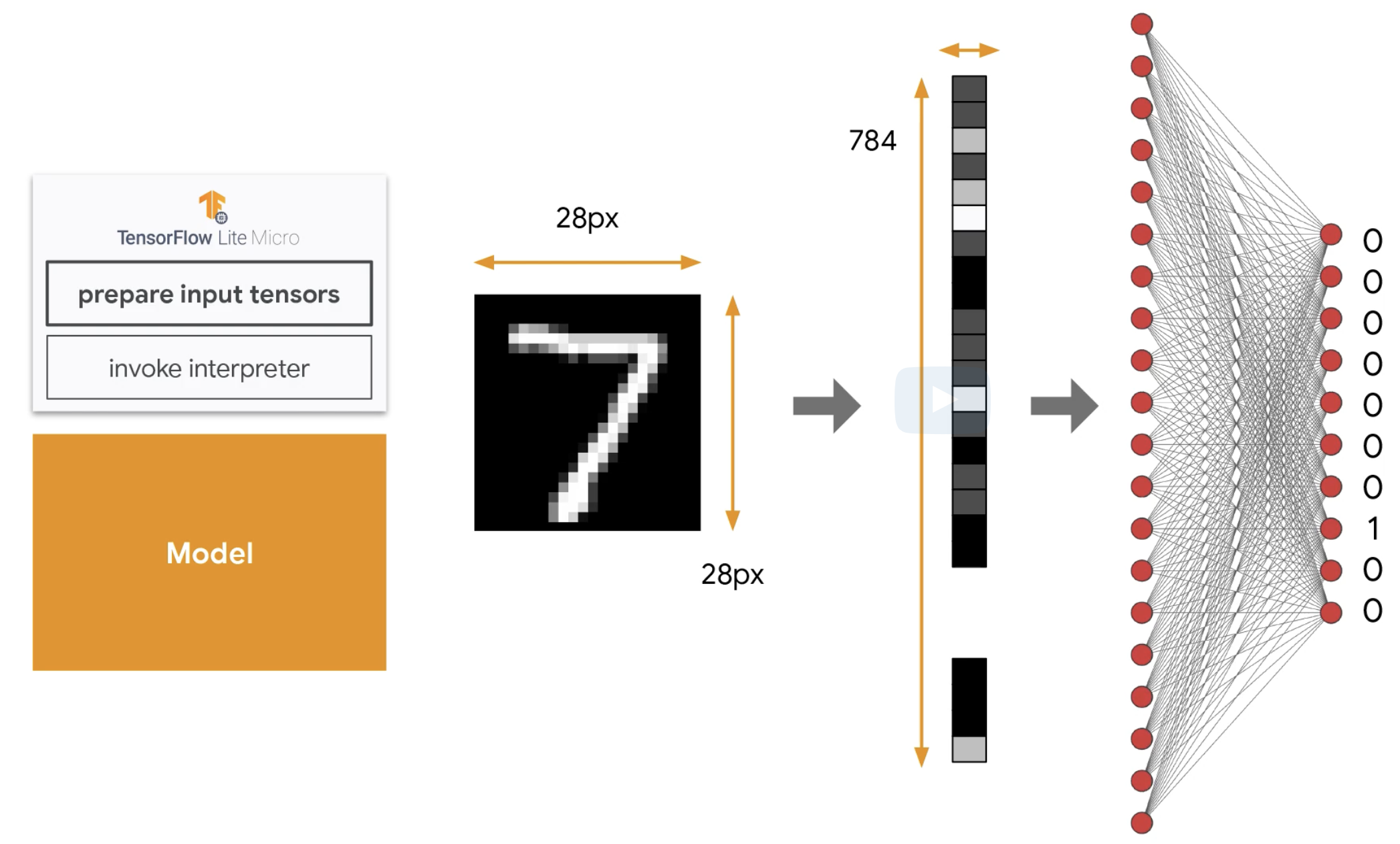

Recall: MNIST data

Spectrogram data

Inference process

- Copy the collected audio data into the feature buffer
  - Manual loop (no fancy `memcpy`!)
- Invoke the interpreter
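The manual copy loop mentioned above might look like the sketch below. It assumes the features are signed 8-bit values and that the data moves element by element into the model's input buffer; `CopyFeatures` and its parameter names are hypothetical and are not taken from the example code.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Copies `count` feature values into the input buffer one element at a
// time, with a plain loop instead of memcpy.
void CopyFeatures(const std::int8_t* features, std::int8_t* input,
                  std::size_t count) {
  assert(features != nullptr && input != nullptr);
  for (std::size_t i = 0; i < count; ++i) {
    input[i] = features[i];
  }
}
```

Once the input buffer is filled, the interpreter is invoked to run inference on it.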

Post-processing

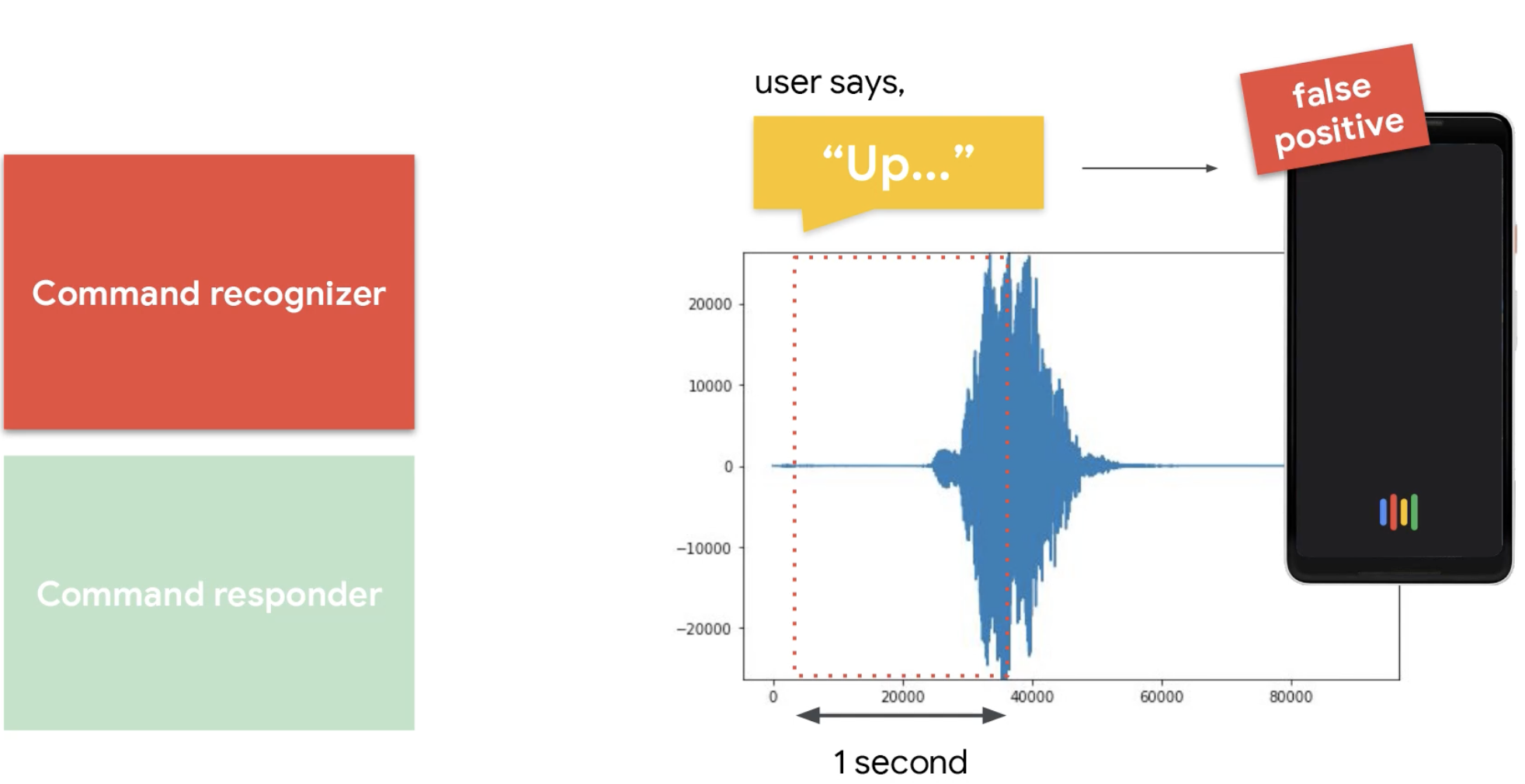

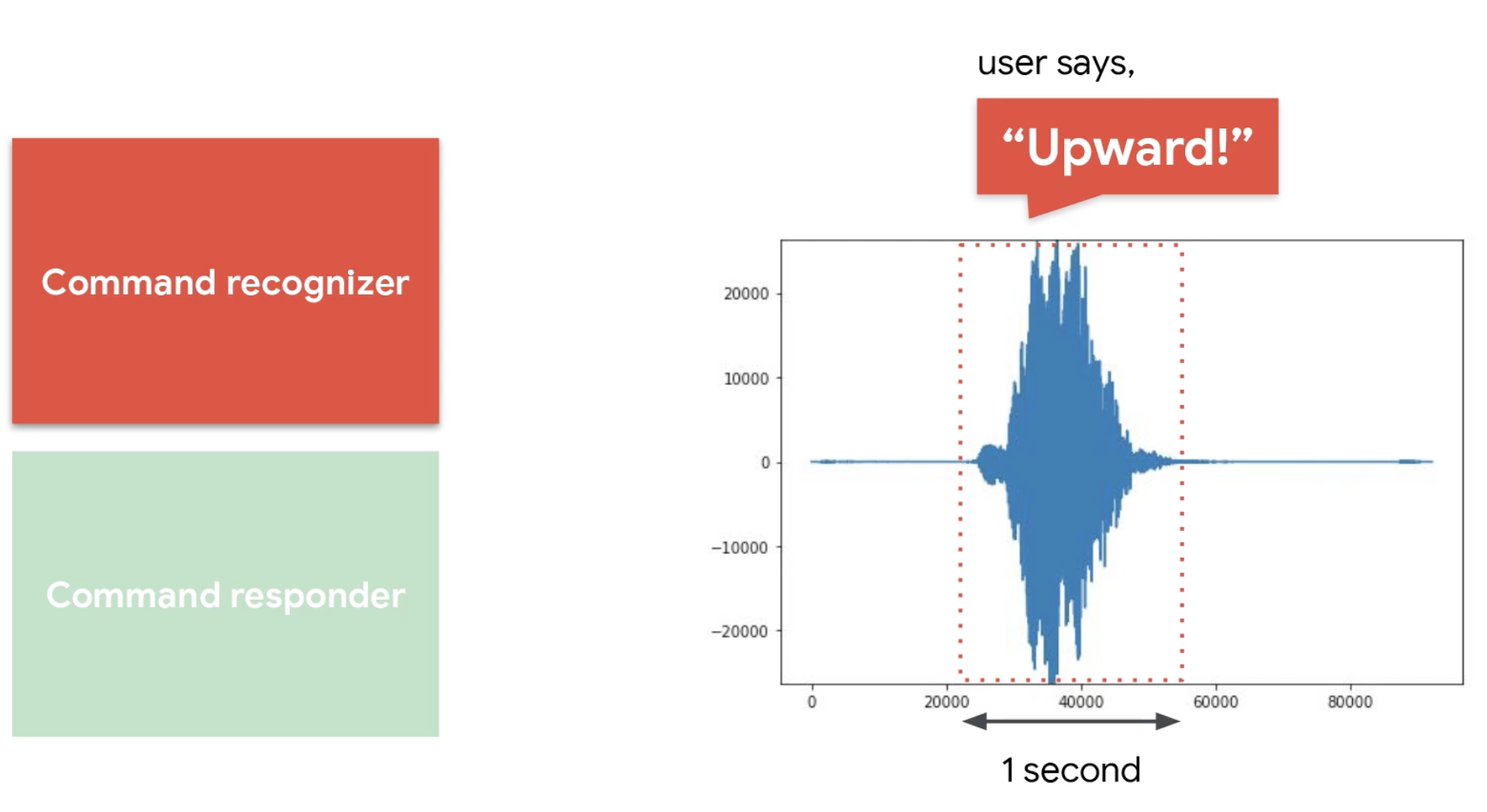

Overview

- Potential problems:

Is it Up?

Or is it Upward?

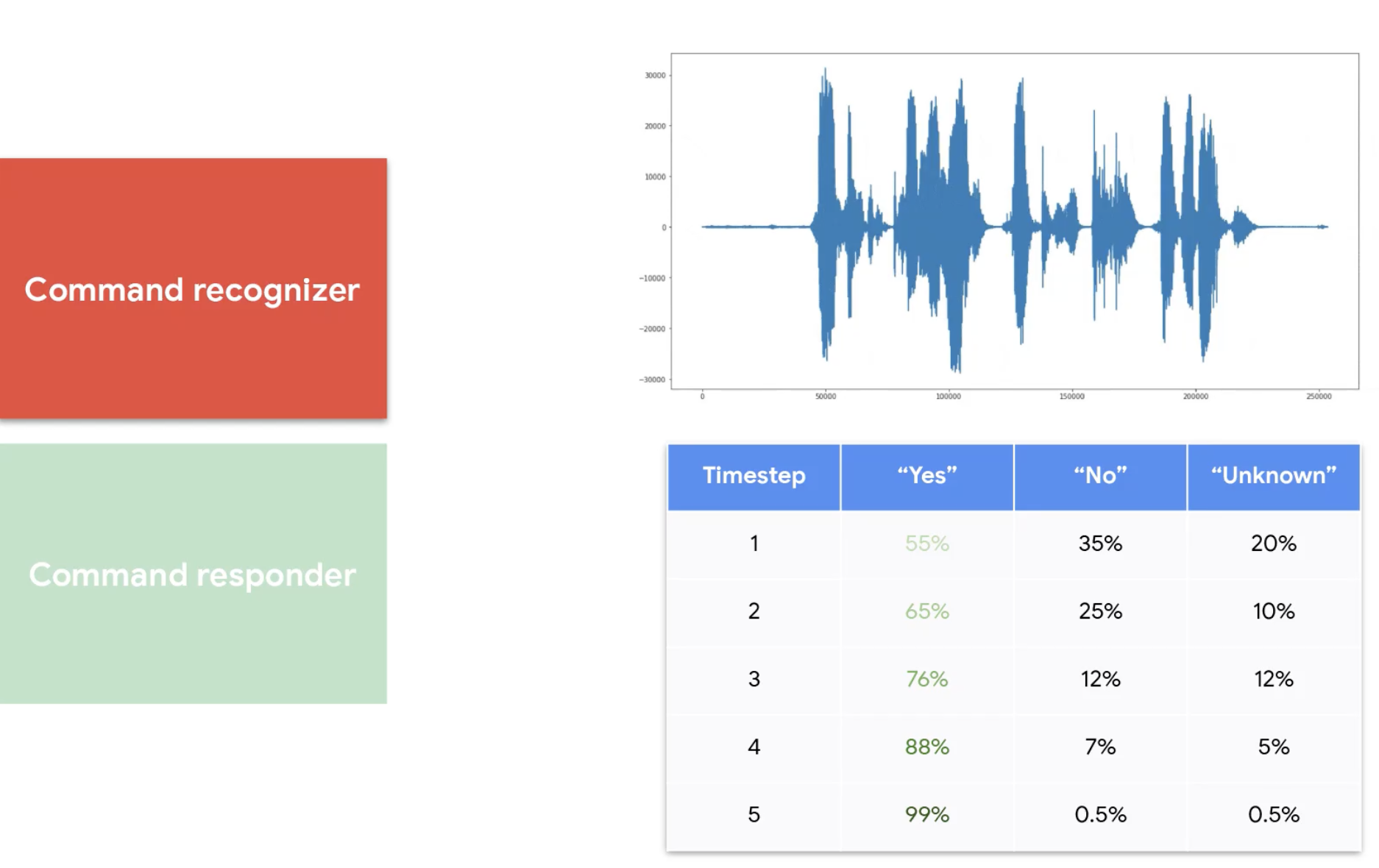

Process Latest Results

- A solution to the false positive problem
  - `micro_speech.ino`, `void loop()`: `recognizer->ProcessLatestResults`
- Calculate confidence score
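One way to think about the confidence score is as an average over several recent inference results, so that a single spurious spike does not trigger a command. The sketch below is a simplified assumption of that idea, not the actual `ProcessLatestResults` implementation; `Detection`, `SmoothScores`, and the threshold parameter are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Detection {
  int category;       // index of the best-scoring category, or -1 if none
  int average_score;  // mean score of that category over recent results
  bool is_confident;  // true if the mean reaches the threshold
};

// Averages each category's score over the recent inference results and
// reports the best category, flagged as confident only above `threshold`.
Detection SmoothScores(const std::vector<std::vector<int>>& recent_scores,
                       int threshold) {
  Detection best{-1, 0, false};
  if (recent_scores.empty()) return best;
  const std::size_t categories = recent_scores.front().size();
  for (std::size_t c = 0; c < categories; ++c) {
    long total = 0;
    for (const auto& scores : recent_scores) {
      assert(scores.size() == categories);
      total += scores[c];
    }
    const int average =
        static_cast<int>(total / static_cast<long>(recent_scores.size()));
    if (best.category < 0 || average > best.average_score) {
      best.category = static_cast<int>(c);
      best.average_score = average;
    }
  }
  best.is_confident = best.average_score >= threshold;
  return best;
}
```

With a threshold of 200, one high score surrounded by low ones averages out below the threshold, while consistently high scores across the window pass it.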

Respond to Command

- `micro_speech.ino`, `void loop()`: `RespondToCommand`
- Configure Lights
- Use `TF_LITE_REPORT_ERROR` to print out logs

Deployment

Preparation

- Make sure that you have used https://netron.app to identify and modify the Ops.
- Use the Pretrained Notebook to convert the tflite model file into `.cc` format.
- Open the `.cc` file and copy its contents to replace the byte array of the model in `micro_speech`.
- Save `micro_speech` as a new project on Arduino.
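When replacing the model's byte array, a quick sanity check on the pasted bytes can catch a bad copy. The sketch below relies on the fact that a TensorFlow Lite flatbuffer carries the file identifier `TFL3` in bytes 4 to 7. The array shown is an 8-byte placeholder, not a real model, and `kPlaceholderModel` and `LooksLikeTfliteModel` are hypothetical names rather than identifiers from the example.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

// Placeholder only: the first 8 bytes of a hypothetical converted model.
// A real converted array is far longer.
alignas(8) const unsigned char kPlaceholderModel[] = {
    0x20, 0x00, 0x00, 0x00, 0x54, 0x46, 0x4c, 0x33};
const std::size_t kPlaceholderModelLen = sizeof(kPlaceholderModel);

// Returns true if bytes 4..7 spell "TFL3", the TensorFlow Lite file
// identifier. This checks the header only, not the whole model.
bool LooksLikeTfliteModel(const unsigned char* data, std::size_t len) {
  assert(data != nullptr);
  return len >= 8 && std::memcmp(data + 4, "TFL3", 4) == 0;
}
```

A check like this only confirms that the header looks right; it does not validate the operators or the tensor shapes.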