Machine Learning Paradigm

Overview and motivation

Example

- A new paradigm for programming

- Explicitly coding a solution vs Implicitly learning a solution

Example: Explicit coding: pong

- It is how we have done it since the beginning of time!

- The ball moves along a path

- Angle/velocity

- The ball hits

- A paddle or a wall

- Change of angle/velocity

- We can code these behaviors based on physical rules + game rules

- Rules are predetermined by the programmer, then coded and tested. Everything needs to be figured out in advance.

- Complexity scales with the amount of code

Explicit coding

- Powerful but can be limited

Example: Explicit coding: activity detection

- Write an app that uses sensors on a phone, a watch, or another device to determine a person’s activity.

- Use the speed data to write a rule: if the speed is below a certain threshold, then the person is probably walking.

=== "Walking"

- If less than 4 miles an hour, then they are walking.

<details class="details details--success" data-variant="success"><summary>Pseudocode</summary>

```python
if speed < 4.0:
    print("Walking")
```

</details>

=== "Running"

- Otherwise (4 miles an hour or more), they are running.

<details class="details details--success" data-variant="success"><summary>Pseudocode</summary>

```python
if speed < 4.0:
    print("Walking")
else:
    print("Running")
```

</details>

=== "Biking"

- If 4 miles an hour or more but less than 12, then they are running.

- Otherwise, they are biking.

<details class="details details--success" data-variant="success"><summary>Pseudocode</summary>

```python
if speed < 4.0:
    print("Walking")
elif speed < 12.0:
    print("Running")
else:
    print("Biking")
```

</details>

=== "Playing soccer"

- ???

<details class="details details--default" data-variant="default"><summary>Failure: Pseudocode</summary>

```python
if speed < 4.0:
    print("Walking")
elif speed < 12.0:
    print("Running")
else:
    print("Biking")
```

</details>

- It is challenging to write rules for complex problems
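A runnable version of the speed rules makes the limitation concrete. This is only a sketch: the function name `classify_activity` and the sample speeds are illustrative assumptions, not part of the lecture code.

```python
def classify_activity(speed_mph):
    """Rule-based guess of an activity from speed alone (thresholds from the rules above)."""
    if speed_mph < 4.0:
        return "Walking"
    elif speed_mph < 12.0:
        return "Running"
    else:
        return "Biking"


# Hypothetical speed samples (mph) from one soccer player: standing, jogging, sprinting.
soccer_samples = [1.5, 6.0, 14.0]
labels = [classify_activity(s) for s in soccer_samples]
print(labels)  # ['Walking', 'Running', 'Biking']
```

Every sample receives one of the three known labels, and none can ever come out as "Playing soccer". Speed alone does not separate the activities, and each new activity would need more hand-written rules.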

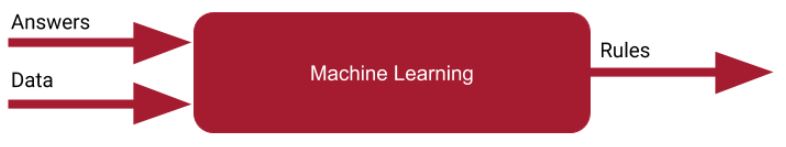

Implicit learning

Machine Learning Paradigm

- In a nutshell:
    - Make a guess about the relationship between the data and its labels, for example:
        - low speed + long distance or short distance + along a road + few stops = walking
        - low speed + long distance + on a field + regular stops = golfing
        - low or high speed + long distance + enclosed area = soccer
    - Measure how good or how bad that guess is.
        - Terminology: loss. Higher loss implies lower accuracy.
    - Use the data from that measurement to estimate the next guess, optimizing based on what you already know.
        - Terminology: optimization.
    - Repeat the process, starting again with a new guess about the relationship between the data and its labels.
        - Assumption: each subsequent guess is better than the previous one.

Example: Relationship between two sets of numbers

- X: $-1,0,1,2,3,4$

- Y: $-3,-1,1,3,5,7$

Question: First guess

- $x_1=-1$ and $y_1=-3$

- $y_1 = 3x_1$

- $Y = 3X$

- Guess: $-3,0,3,6,9,12$

- Expected: Y: $-3,-1,1,3,5,7$

Question: Second guess

- $x_1=-1$ and $y_1=-3$

- $y_1 = 3x_1$

- $x_2=0$ and $y_2=-1$

- $y_2 = x_2 - 1$

- $Y = 3X - 1$

- Guess: $-4,-1,2,5,8,11$

- Expected: Y: $-3,-1,1,3,5,7$

Third guess

- $x_1=-1$ and $y_1=-3$

- $y_1 = 3x_1$

- $x_2=0$ and $y_2=-1$

- $y_2 = x_2 - 1$

- $x_3=1$ and $y_3=1$

- $y_3 = 2x_3 - 1$

- $Y = 2X - 1$

- Guess: $-3,-1,1,3,5,7$

- Expected: Y: $-3,-1,1,3,5,7$
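The three guesses can be replayed in a few lines of Python to see how each compares with the expected values. This is a minimal sketch; the helper name `predict` and the `guesses` dictionary are illustrative and do not come from the lecture code.

```python
xs = [-1, 0, 1, 2, 3, 4]
expected = [-3, -1, 1, 3, 5, 7]


def predict(m, c, xs):
    """Apply the guess Y = m*X + c to every x."""
    return [m * x + c for x in xs]


# (m, c) pairs for the three guesses
guesses = {"Y = 3X": (3, 0), "Y = 3X - 1": (3, -1), "Y = 2X - 1": (2, -1)}

results = {}
for name, (m, c) in guesses.items():
    ys = predict(m, c, xs)
    # Signed error per point: positive means the guess is too high
    errors = [y - e for y, e in zip(ys, expected)]
    results[name] = errors
    print(name, ys, errors)
```

Only the third guess produces an error of zero at every point. The first two are right at one point each and drift further off as X grows.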

Measure accuracy

Setting up conda environment

```bash linenums="1"
conda create -n tf python=3.12
conda activate tf
pip install --upgrade pip
pip install tensorflow
pip install opencv-python scipy pooch matplotlib jupyter ipykernel
```

Example: Coding hands on

- Bring up a Jupyter notebook

- Run the following code segment in a cell and try different combinations of `w` and `b` to observe the loss value

```python linenums="1"
--8<-- "docs/csc581/lectures/codes/02-ml-paradigm/exploring_loss.py"
```

How good (or bad) are your guesses?

- We want to have a way to measure the loss values and their aggregation.

- Account for negative values (over- and under-guessing), so that errors of opposite sign do not cancel out when aggregated

```python linenums="1"
--8<-- "docs/csc581/lectures/codes/02-ml-paradigm/loss_calculation.py"
```

Loss function/Cost function

Example: Mean Squared Error (MSE)

$J = \frac{1}{n}\sum(actual-predicted)^2$

- Given the following

- Set of X: $x_0, x_1, \dots, x_n$

- Set of Y (actual): $y_0, y_1, \dots, y_n$

- A linear regression function that tries to estimate Y from X: $Y = mX + c$

Example: MSE loss function for linear regression

$J = \frac{1}{n}\sum^{n}_{i=0} (y_i - (mx_i+c))^2$

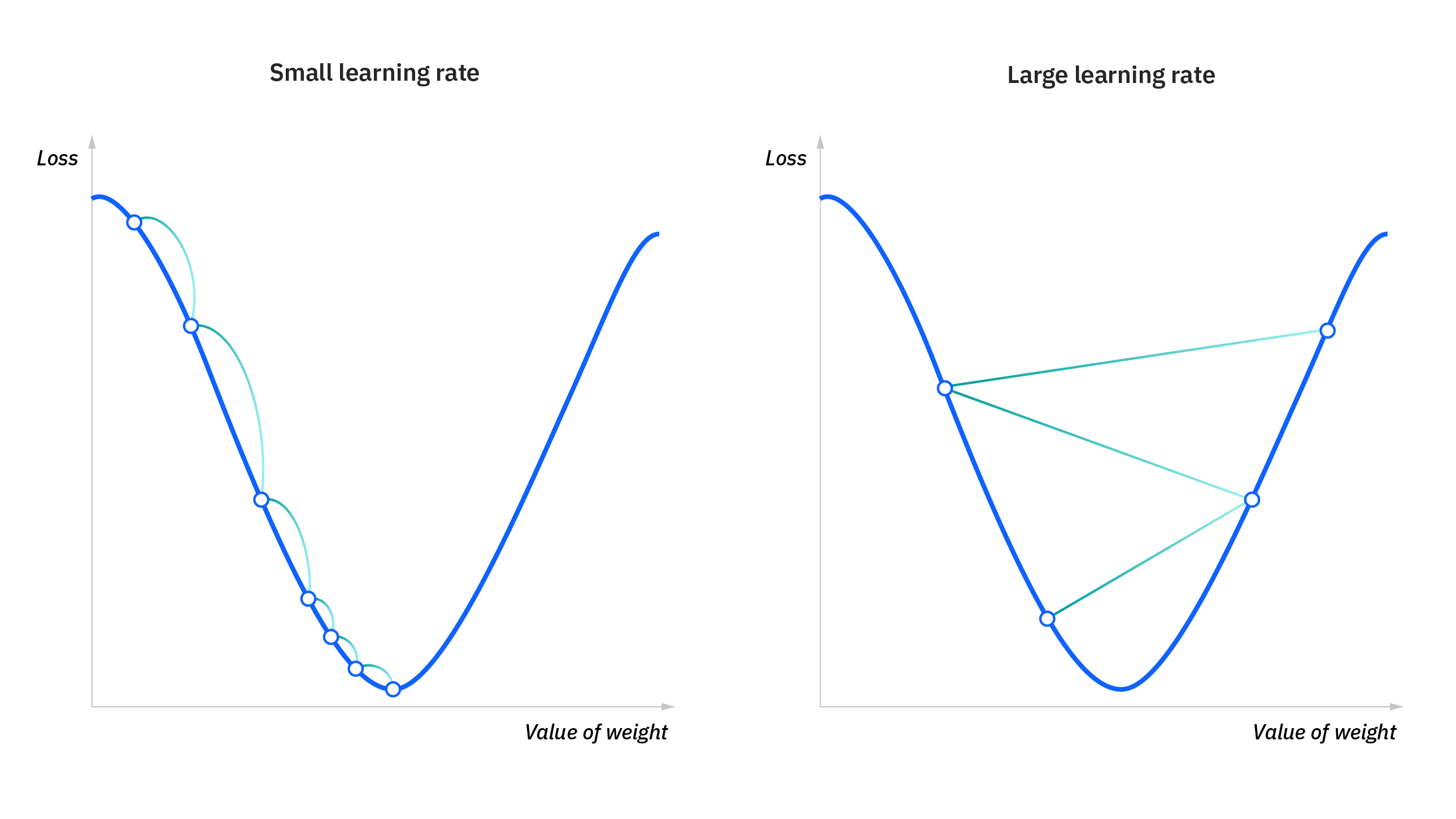
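As a rough illustration, the MSE for a line $Y = mX + c$ can be computed directly in plain Python. The function name `mse` is an assumption made for this sketch, and the three `(m, c)` pairs are the guesses from the earlier number example.

```python
def mse(m, c, xs, ys):
    """Mean squared error of the line y = m*x + c on the points (xs, ys)."""
    n = len(xs)
    return sum((y - (m * x + c)) ** 2 for x, y in zip(xs, ys)) / n


xs = [-1, 0, 1, 2, 3, 4]
ys = [-3, -1, 1, 3, 5, 7]

loss_first = mse(3, 0, xs, ys)    # Y = 3X      -> 55/6, about 9.17
loss_second = mse(3, -1, xs, ys)  # Y = 3X - 1  -> 31/6, about 5.17
loss_third = mse(2, -1, xs, ys)   # Y = 2X - 1  -> 0.0
print(loss_first, loss_second, loss_third)
```

The loss shrinks with each guess and reaches zero for $Y = 2X - 1$. Because the errors are squared, over-guesses and under-guesses both add to the loss instead of cancelling.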

Gradient descent

- Gradient: a measure of how much the error changes in response to a change in each weight (m and c in the case of linear regression).
    - Slope of a function
- Gradient descent:
    - An iterative procedure that repeatedly adjusts m and c against the gradient, scaled by a predefined step size (the learning rate), until the loss stops decreasing noticeably.

```python linenums="1"
--8<-- "docs/csc581/lectures/codes/02-ml-paradigm/3d_loss.py"
```
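The "slope" reading of the gradient can be checked numerically by nudging m and c slightly and watching how the MSE changes. The sketch below uses central differences; the names `mse` and `numerical_gradient` and the step size `h` are assumptions made for illustration.

```python
def mse(m, c, xs, ys):
    """Mean squared error of the line y = m*x + c."""
    return sum((y - (m * x + c)) ** 2 for x, y in zip(xs, ys)) / len(xs)


def numerical_gradient(m, c, xs, ys, h=1e-6):
    """Estimate the slope of the loss in the m and c directions (central differences)."""
    slope_m = (mse(m + h, c, xs, ys) - mse(m - h, c, xs, ys)) / (2 * h)
    slope_c = (mse(m, c + h, xs, ys) - mse(m, c - h, xs, ys)) / (2 * h)
    return slope_m, slope_c


xs = [-1, 0, 1, 2, 3, 4]
ys = [-3, -1, 1, 3, 5, 7]

# Slopes at the first guess Y = 3X (m = 3, c = 0)
slope_m, slope_c = numerical_gradient(3.0, 0.0, xs, ys)
# Slopes at the exact answer Y = 2X - 1
flat_m, flat_c = numerical_gradient(2.0, -1.0, xs, ys)
print(slope_m, slope_c, flat_m, flat_c)
```

At $m = 3, c = 0$ both slopes are positive (about 13.33 and 5), so lowering m and c reduces the loss. At $m = 2, c = -1$ both slopes are essentially zero, which marks the minimum.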

Example: Gradient descent for linear regression

- $D_m = \frac{-2}{n}\sum^{n}_{i=0} x_i(y_i - (mx_i+c))$

- $D_c = \frac{-2}{n}\sum^{n}_{i=0} (y_i - (mx_i+c))$

- L = learning rate < 1

- $m = m - LD_m$

- $c = c - LD_c$
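The update rule can be sketched in plain Python, using the partial derivatives of the MSE with respect to m and c. The starting point, the learning rate of 0.05, and the 1000 iterations are assumed values chosen so that this small example converges; they are not prescribed by the lecture.

```python
xs = [-1, 0, 1, 2, 3, 4]
ys = [-3, -1, 1, 3, 5, 7]
n = len(xs)

m, c = 0.0, 0.0  # initial guess (arbitrary starting point)
L = 0.05         # learning rate (assumed value, small enough to converge here)

for step in range(1000):
    # Residuals y_i - (m*x_i + c) for the current guess
    residuals = [y - (m * x + c) for x, y in zip(xs, ys)]
    # Partial derivatives of the MSE with respect to m and c
    D_m = (-2 / n) * sum(x * r for x, r in zip(xs, residuals))
    D_c = (-2 / n) * sum(residuals)
    # Step against the gradient
    m = m - L * D_m
    c = c - L * D_c

print(m, c)  # approaches 2.0 and -1.0
```

With a much larger learning rate the updates overshoot and the loss grows instead of shrinking; with a much smaller one, many more iterations are needed to get close to $Y = 2X - 1$.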

Example: Gradient descent in tensorflow

- Download and launch the following notebook

- Change the learning rate and observe how that changes the loss values

Introductory Neural Network

Preparation

- In the previous lecture, I showed how to install Anaconda and TensorFlow locally

Alternative: Google Colab

- Visit https://colab.research.google.com/

- Sign in with your Gmail account, or with your West Chester University account (it works with Google sign-in)

- Click `File` and select `New notebook in Drive`

Loading libraries

- In the first cell, run the following:

```python linenums="1"
import sys
import numpy as np
import tensorflow as tf
```

Set up a simple neural network model

- In the second cell, run the following:

```python linenums="1"
model = tf.keras.Sequential([tf.keras.layers.Dense(units=1, input_shape=[1])])
model.compile(optimizer='sgd', loss='mean_squared_error')

# Training data: the X and Y values from the earlier example
xs = np.array([-1.0, 0.0, 1.0, 2.0, 3.0, 4.0], dtype=float)
ys = np.array([-3.0, -1.0, 1.0, 3.0, 5.0, 7.0], dtype=float)

model.fit(xs, ys, epochs=500)
```

- Line 1 defines a very simple neural network model.
    - `units=1`: Dimensionality of the output space
    - `input_shape=[1]`: Dimensionality of the input data
    - We're training a neural network on single x's to predict single y's.

<details class="details details--default" data-variant="default"><summary>Example: A neural network</summary>

<figure>

<picture>

<!-- Auto scaling with imagemagick -->

<!--

See https://www.debugbear.com/blog/responsive-images#w-descriptors-and-the-sizes-attribute and

https://developer.mozilla.org/en-US/docs/Learn/HTML/Multimedia_and_embedding/Responsive_images for info on defining 'sizes' for responsive images

-->

<source class="responsive-img-srcset" srcset="/assets/img/courses/csc574/02-ml-paradigm/nn-480.webp 480w,/assets/img/courses/csc574/02-ml-paradigm/nn-800.webp 800w,/assets/img/courses/csc574/02-ml-paradigm/nn-1400.webp 1400w," type="image/webp" sizes="95vw" />

<img src="/assets/img/courses/csc574/02-ml-paradigm/nn.jpg" width="50%" height="auto" data-zoomable="" loading="lazy" onerror="this.onerror=null; $('.responsive-img-srcset').remove();" />

</picture>

</figure>

<ul>

<li>Hidden Layer 1: <code class="language-plaintext highlighter-rouge">units=4</code></li>

<li>Hidden Layer 2: <code class="language-plaintext highlighter-rouge">units=4</code></li>

<li>Output Layer: <code class="language-plaintext highlighter-rouge">units=1</code></li>

</ul>

</details>

- Line 2 compiles the model.
    - The optimizer is defined as `sgd`: Stochastic Gradient Descent.
    - The loss function is `mean_squared_error`: MSE.
- Lines 5 and 6 define the X and Y arrays used to train the model.
- The fitting process runs 500 times (500 epochs).
    - Each epoch is a step:
        - Guess
        - Measure the loss
        - Optimize and repeat

```python linenums="1"
print(model.predict(np.array([10.0])))
```

- By providing an array of test inputs, we can observe the predicted outputs

```python linenums="1"
print(model.predict(np.array([10.0, 11.0])))
```

Common Layer Types

- Dense Layer: every neuron in the previous layer is connected to every neuron in the next layer

- Convolutional Layer: contains filters that can be used to transform data

- Recurrent Layer: allows learning about relationships between data points in a sequence
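"Fully connected" has a direct consequence for model size: a dense layer holds one weight for every input-unit pair plus one bias per unit. The sketch below counts these for the single-unit model and for a network with two hidden layers of 4 units and one output unit. The helper `dense_param_count` is hypothetical, and a single input feature is assumed for the larger network.

```python
def dense_param_count(inputs, units):
    """A dense layer has one weight per input-unit pair plus one bias per unit."""
    return inputs * units + units


# Single-unit model on one input: one weight and one bias
single = dense_param_count(inputs=1, units=1)

# Layer sizes of a 1 -> 4 -> 4 -> 1 network (input size of 1 is an assumption)
layer_units = [4, 4, 1]
inputs = 1
total = 0
for units in layer_units:
    total += dense_param_count(inputs, units)
    inputs = units  # this layer's outputs feed the next layer

print(single, total)  # 2 33
```

The single-unit model therefore learns exactly two numbers, which play the roles of m and c in $Y = mX + c$.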

Question: Exercise

- Replace `SHAPE` and `LOSS` with relevant values in the following segment of code, then run it in a new cell

```python linenums="1"
import sys

import numpy as np
import tensorflow as tf
import matplotlib.pyplot as plt
from tensorflow.keras import Sequential
from tensorflow.keras.layers import Dense

# Record the model's predictions at the end of every epoch
predictions = []
class myCallback(tf.keras.callbacks.Callback):
    def on_epoch_end(self, epoch, logs=None):
        predictions.append(model.predict(xs))
callbacks = myCallback()

# We then define the xs (inputs) and ys (outputs)
xs = np.array([-1.0, 0.0, 1.0, 2.0, 3.0, 4.0], dtype=float)
ys = np.array([-3.0, -1.0, 1.0, 3.0, 5.0, 7.0], dtype=float)

SHAPE = #YOUR CODE HERE#
LOSS = #YOUR CODE HERE#

model = Sequential([Dense(units=1, input_shape=SHAPE)])
model.compile(optimizer='sgd', loss=LOSS)
model.fit(xs, ys, epochs=300, callbacks=[callbacks], verbose=2)

EPOCH_NUMBERS = [1, 25, 50, 150, 300]  # Update me to see other Epochs
plt.plot(xs, ys, label="Ys")
for EPOCH in EPOCH_NUMBERS:
    plt.plot(xs, predictions[EPOCH-1], label="Epoch = " + str(EPOCH))
plt.legend()
plt.show()
```