Distributed Machine Learning with Spark

Distributed Machine Learning with Spark

Application

Example: Spam filtering

| viagra | learning | the | dating | nigeria | spam? | |

|---|---|---|---|---|---|---|

| X1 | 1 | 0 | 1 | 0 | 0 | $y_1$ = 1 |

| X2 | 0 | 1 | 1 | 0 | 0 | $y_2$ = -1 |

| X3 | 0 | 0 | 0 | 0 | 1 | $y_3$ = 1 |

- Instance spaces X1, X2, X3 belong to set X (data points)

- Binary or real-valued feature vector X of word occurrences

-

dfeatures (words and other things, d is approximately 100,000)

- Class Y

- Spam = 1

- Ham = -1

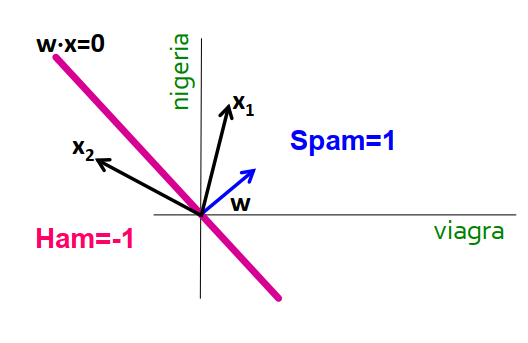

Linear models for classification

Overview

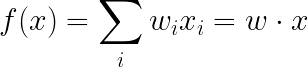

\[f(x) = \begin{cases} +1 & \text{if } w_{1}x_{1}+w_{2}x_{2}+...+w_{d}x_{d} \ge \theta \\ -1 & \text{otherwise} \end{cases}\]- Vector $X_j$ contains real values

- The Euclidean norm is

1. - Each vector has a label $y_j$

- The Euclidean norm is

- The goal is to find a vector W = ($w_1$, $w_2$, …, $w_d$) with $w_j$ is a real number such that:

- The labeled points are clearly separated by a line:

- Dot is spam, minus is ham!

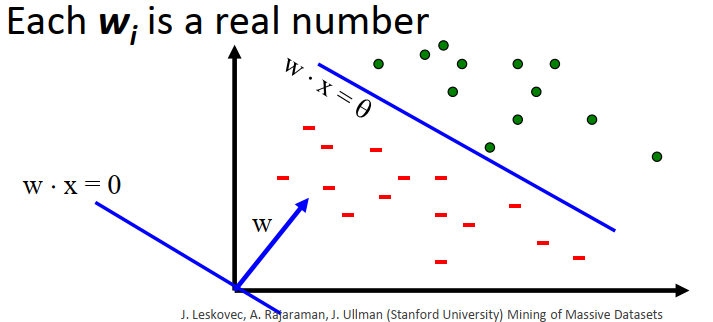

Linear classifiers

- Each feature

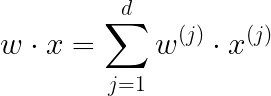

ias a weight w_i$ - Prediction is based on the weighted sum:

- If f(x) is:

- Positive: predict +1

- Negative: predict -1

Support Vector Machine

Overview

- Originally developed by Vapnik and collaborators as a linear classifier.

- Could be modified to support non-linear classification by mapping into high-dimensional spaces.

- Problem statement:

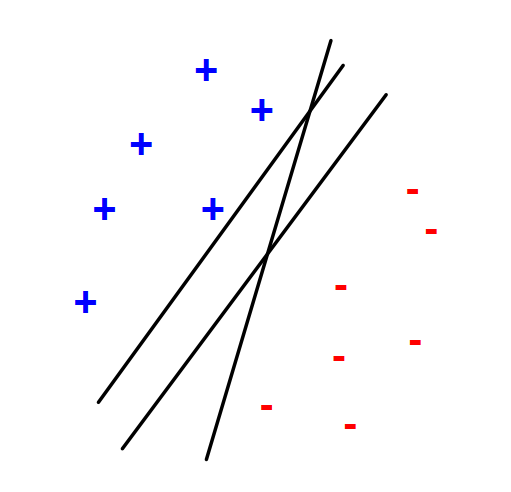

- We want to separate

+from-using a line. - Training examples:

- We want to separate

1

- Each example `i`:

1

- Inner product:

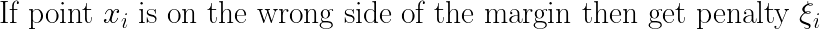

- Which is the best linear separate defined by w?

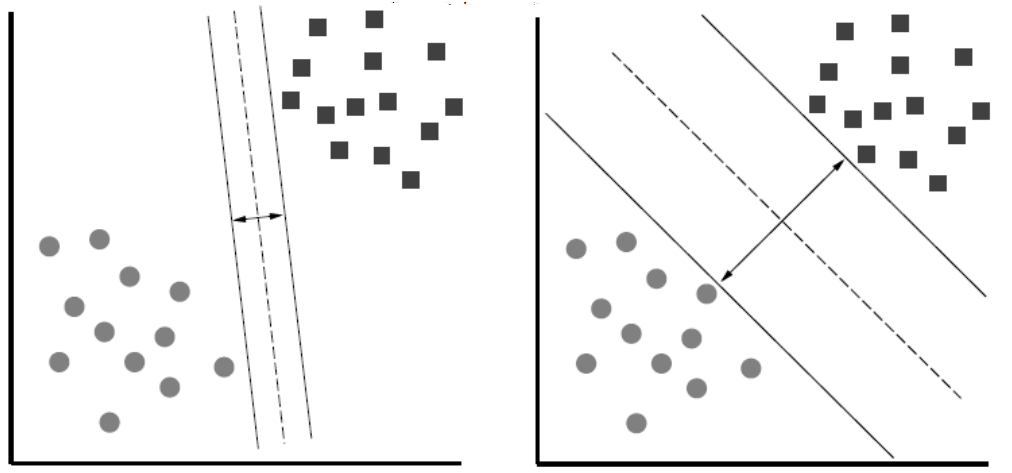

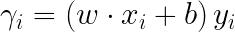

Support Vector Machine: largest margin

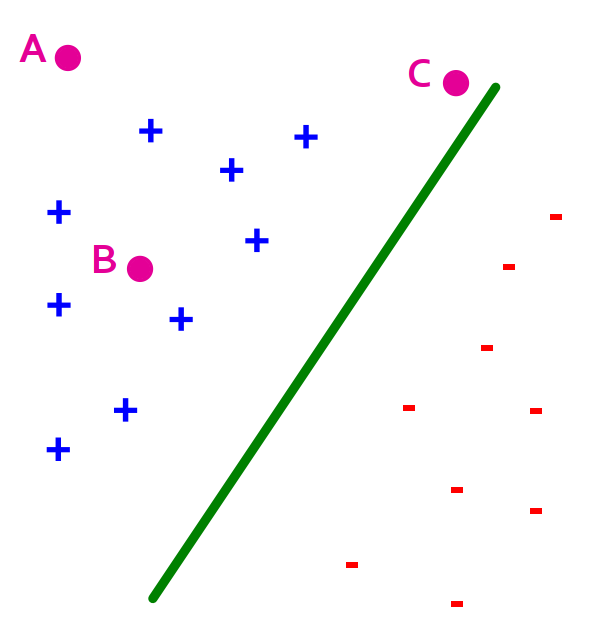

- Distance from the separating line corresponds to the confidence of the prediction.

- For example, we are more sure about the class of

AandBthan ofC.

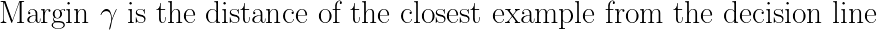

- Margin definition:

- Maximizing the margin while identifying

wis good according to intuition, theory, and practice.

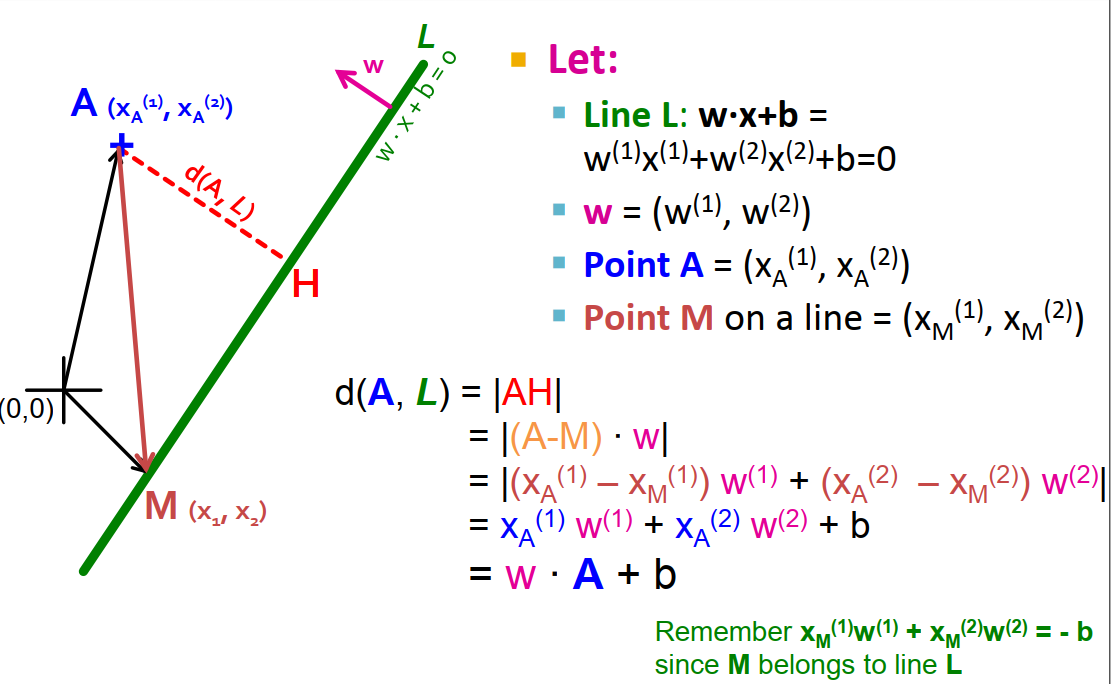

- A math question: how do you narrate this equation?

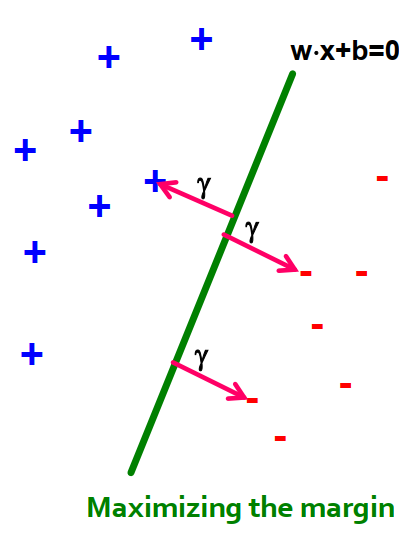

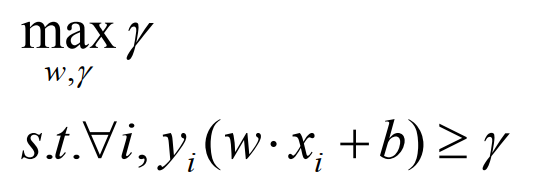

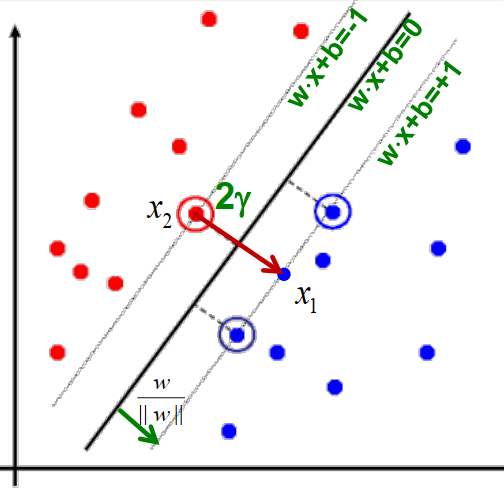

Support Vector Machine: what is the margin?

- Slide from the book

- Notation:

-

Gammais the distance from point A to the linear separator L:d(A,L) = |AH| - If we select a random point M on line L, then d(A,L) is the projection of AM onto vector

w. - Project

- If we assume the normalized Euclidean value of

w,|w|, is equal to one, that bring us to the result in the slide.

-

- In other words, maximizing the margin is directly related to how

wis chosen. - For the ith data point:

.

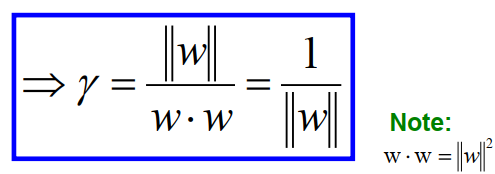

Some more math …

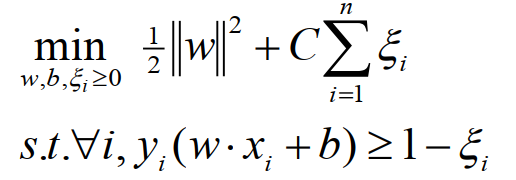

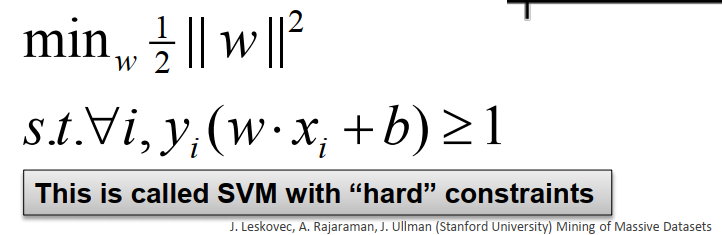

- After some more mathematical manipulations:

- Everything comes back to an optimization problem on

w:

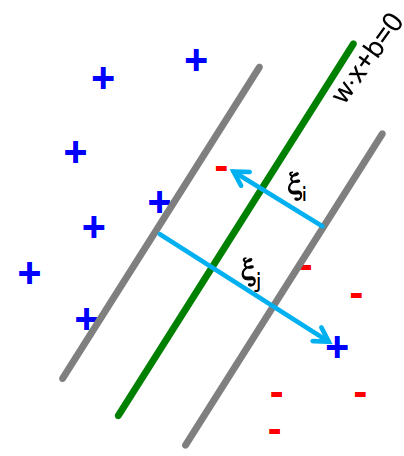

SVM: Non-linearly separable data

- For each data point:

- If margin greater than 1, don’t care.

- If margin is less than 1, pay linear penalty.

- Introducing slack variables: