Definition

- A collective communication must involve ALL processes within the scope of a communicator.

- Unexpected behavior, including program failure (for example, a deadlock), can result if even one process does not participate.

- Types of collective communications:

- Synchronization: barrier

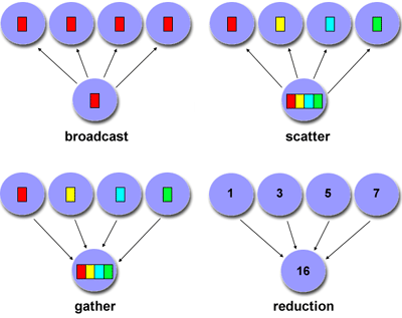

- Data movement: broadcast, scatter/gather

- Collective computation (aggregate data to perform computation): Reduce
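The data-movement and computation collectives listed above can be sketched with a small model. This is not real MPI code: it is a minimal pure-Python illustration in which a communicator is assumed to be a list indexed by rank, and the function names (`broadcast`, `scatter`, `gather`, `reduce_`) and the constant `SIZE` are hypothetical, chosen only for this example. A barrier is omitted because it moves no data.

```python
from functools import reduce as fold
from operator import add

SIZE = 4  # hypothetical number of processes in the communicator


def broadcast(root_value, size):
    """Model of broadcast: every rank ends up with a copy of the root's value."""
    return [root_value for _ in range(size)]


def scatter(root_data, size):
    """Model of scatter: the root's data is split into equal chunks, one per rank."""
    if len(root_data) % size != 0:
        raise ValueError("data length must be divisible by the number of ranks")
    chunk = len(root_data) // size
    return [root_data[r * chunk:(r + 1) * chunk] for r in range(size)]


def gather(per_rank):
    """Model of gather: the root collects every rank's chunk, ordered by rank."""
    return [item for part in per_rank for item in part]


def reduce_(per_rank, op):
    """Model of reduce: the root receives all contributions combined with `op`."""
    return fold(op, per_rank)


data = list(range(8))                  # data held by the root
chunks = scatter(data, SIZE)           # [[0, 1], [2, 3], [4, 5], [6, 7]]
partial = [sum(c) for c in chunks]     # each rank sums its own chunk: [1, 5, 9, 13]
total = reduce_(partial, add)          # 28, available at the root
everyone = broadcast(total, SIZE)      # [28, 28, 28, 28]
```

Note that every function takes or returns one entry per rank. That mirrors the rule stated at the top of this section: the operation is only well defined when all processes in the communicator take part.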