Hurricane Sandy (2012)

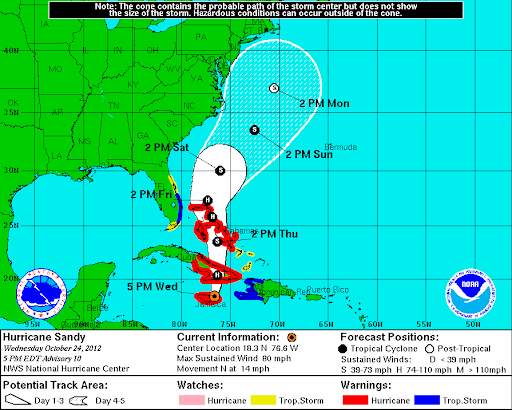

- the deadliest, most destructive, and strongest hurricane of the 2012 Atlantic hurricane season,

- the second-costliest hurricane on record in the United States at the time (nearly $70 billion in damage, in 2012 dollars),

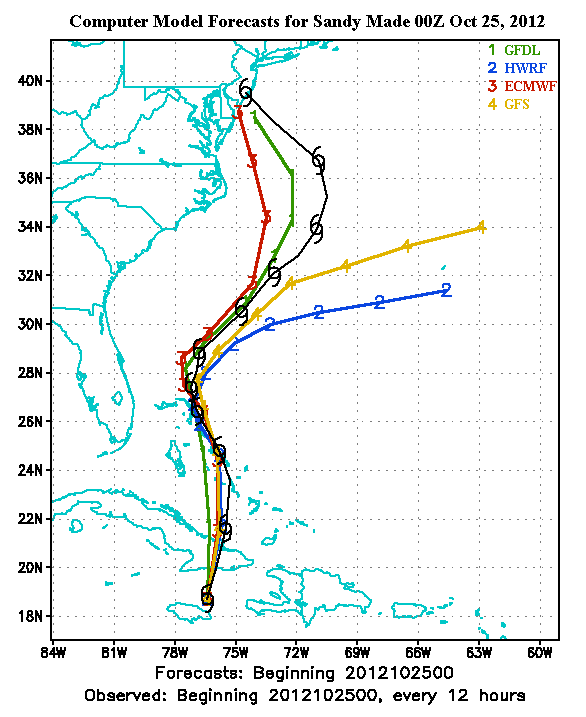

- affected 24 U.S. states, with particularly severe damage in New Jersey and New York,

- hit New York City on October 29, flooding streets, tunnels and subway lines and cutting power in and around the city.